James Prather, Ph.D.

I care about people and their experience with technology. I've been an award-winning UX teacher and researcher since 2013.

View my ACU Faculty Profile and my Google Scholar page.

Portfolio

Click the cards below to read detailed case studies.

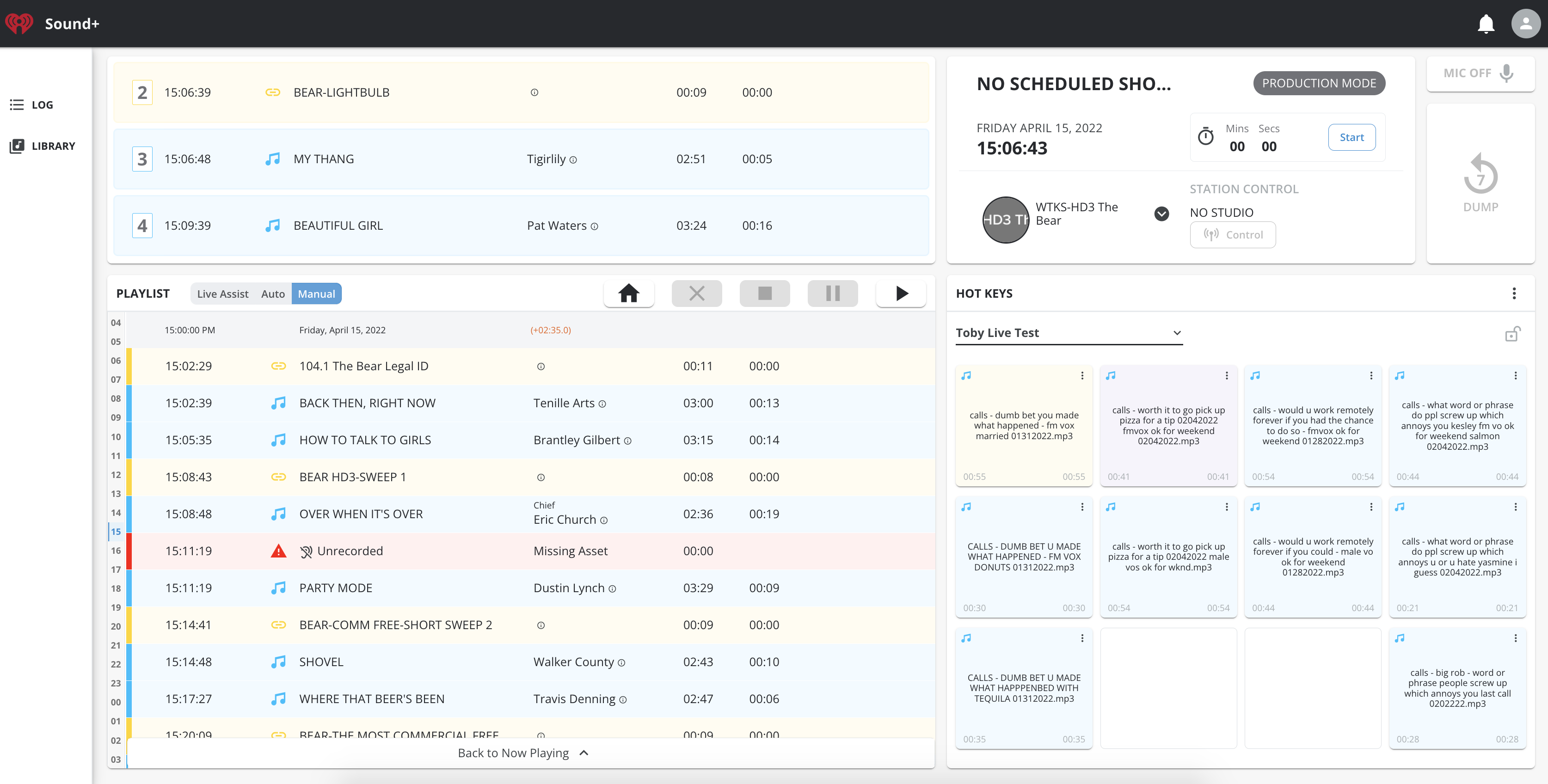

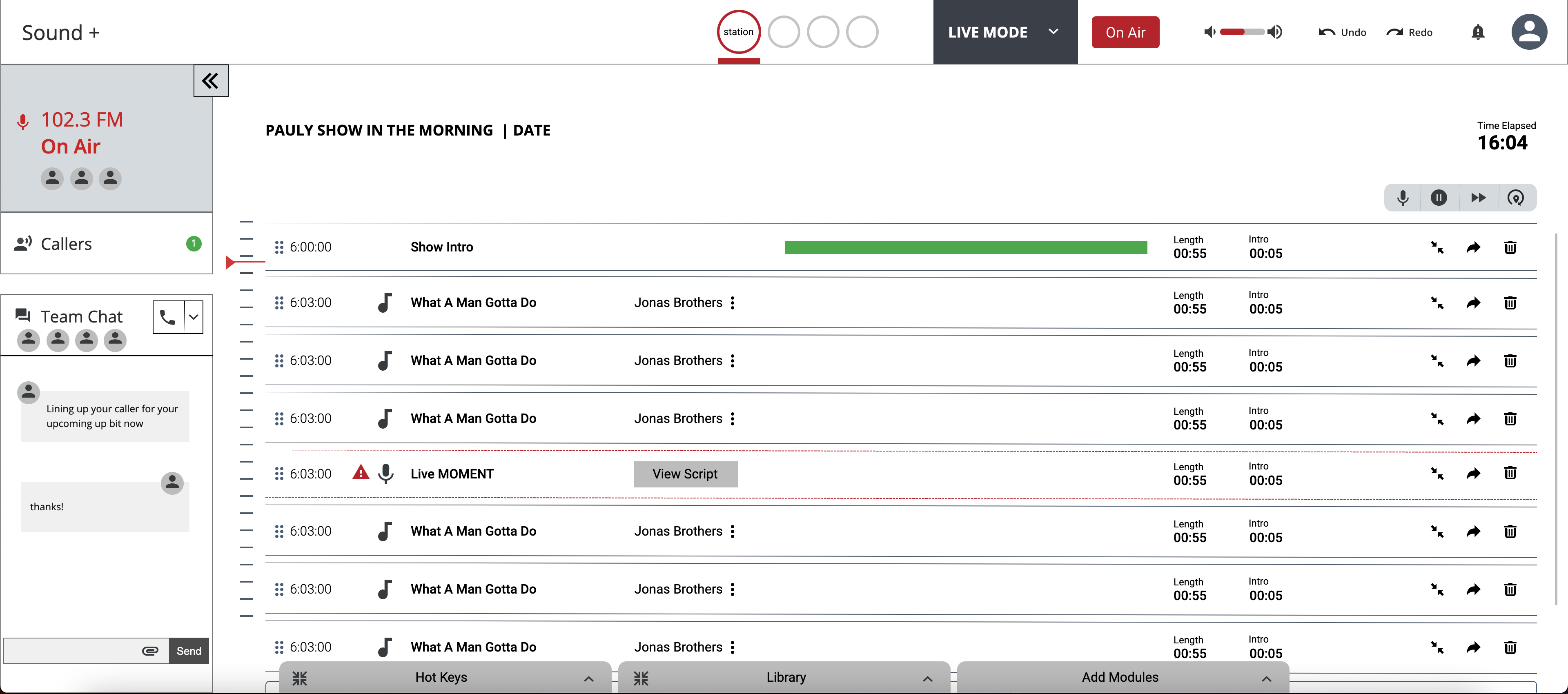

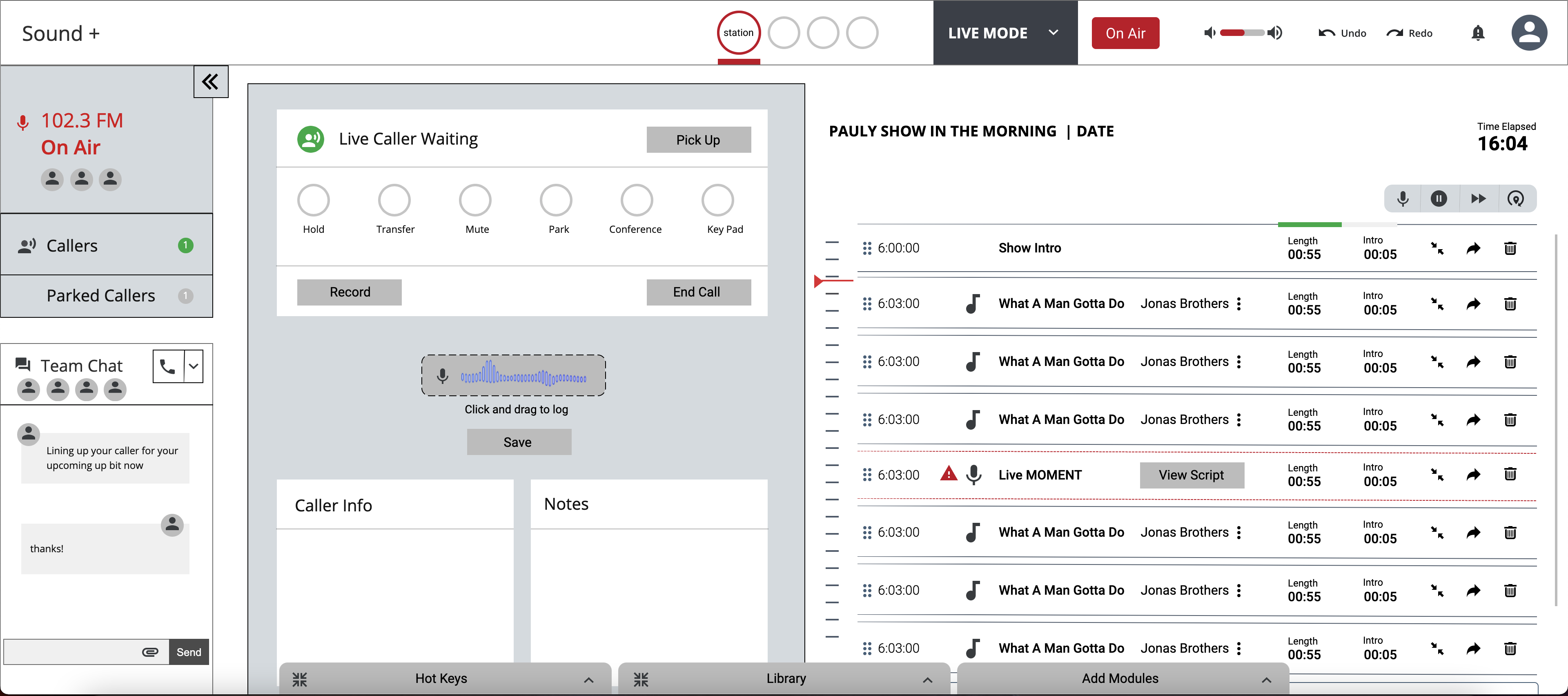

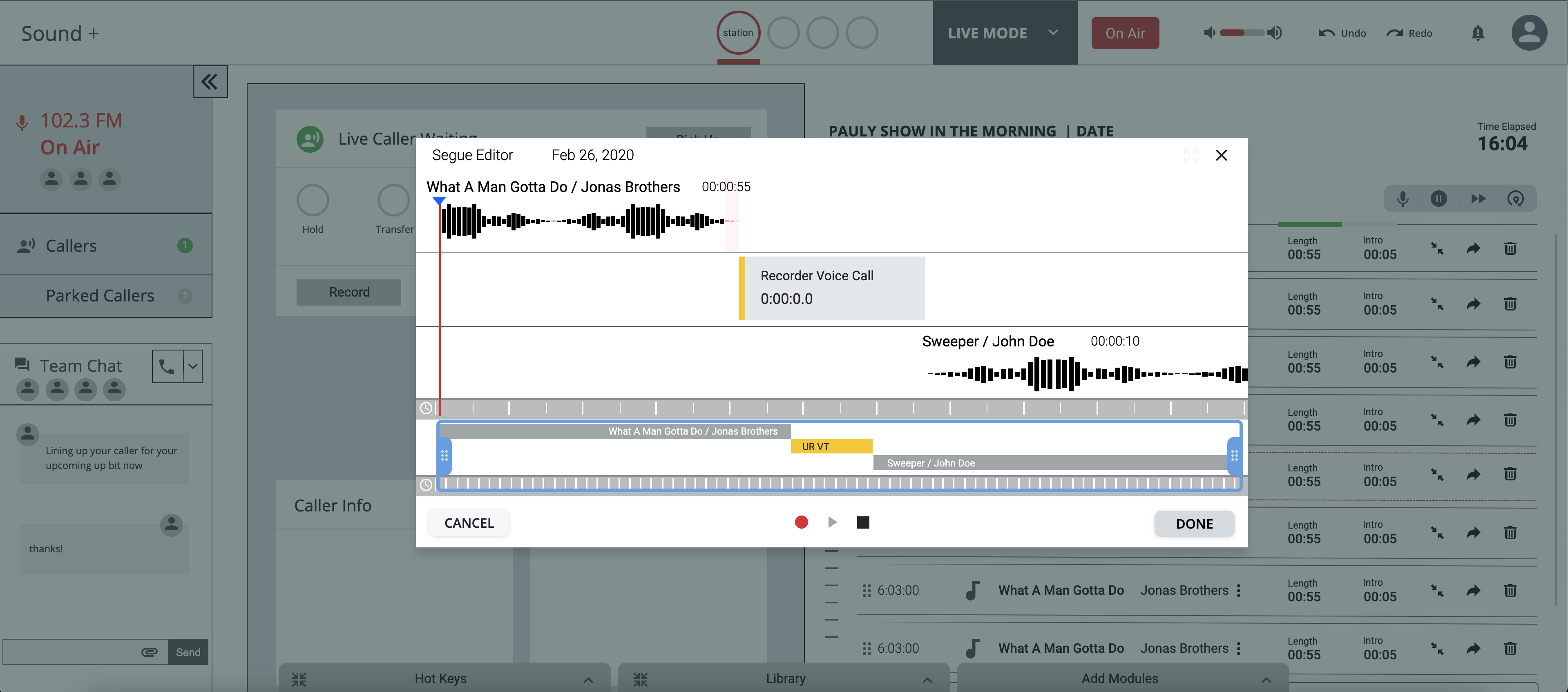

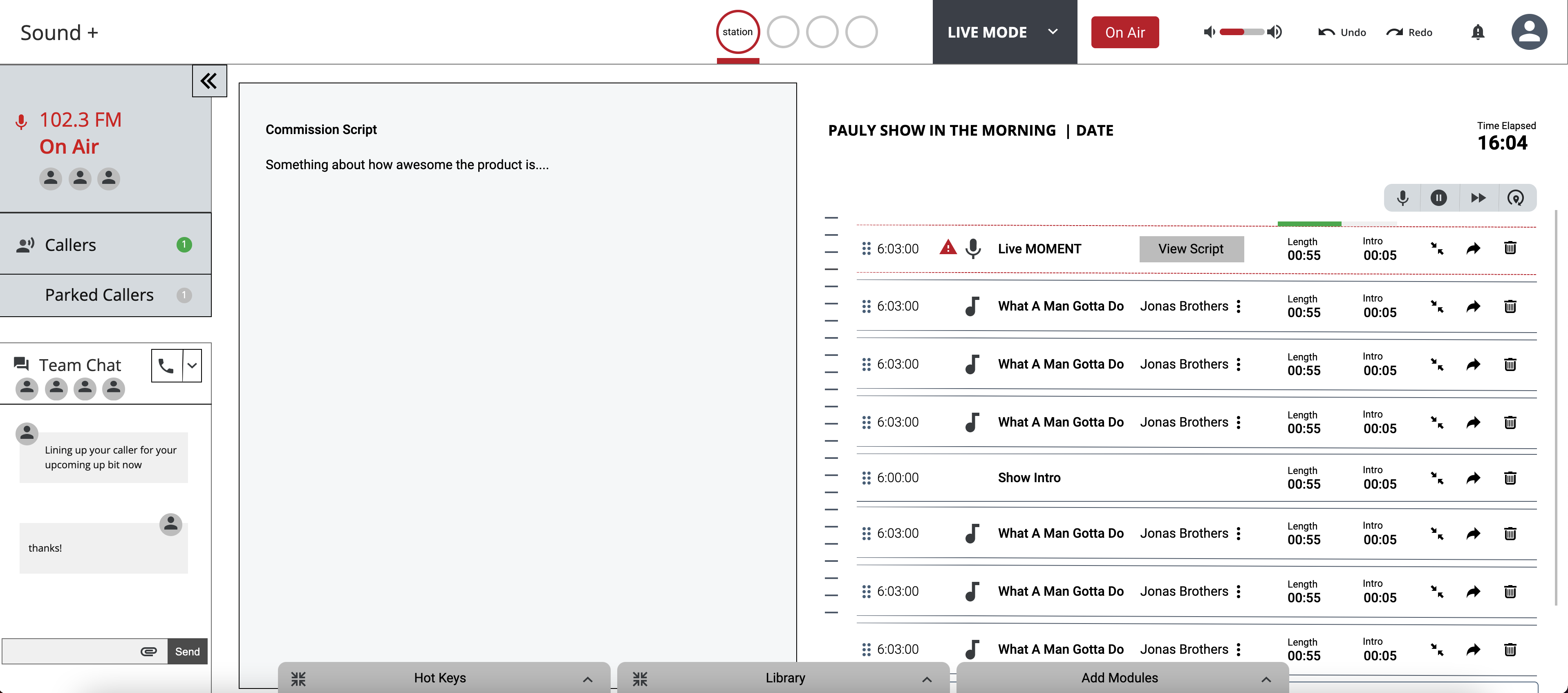

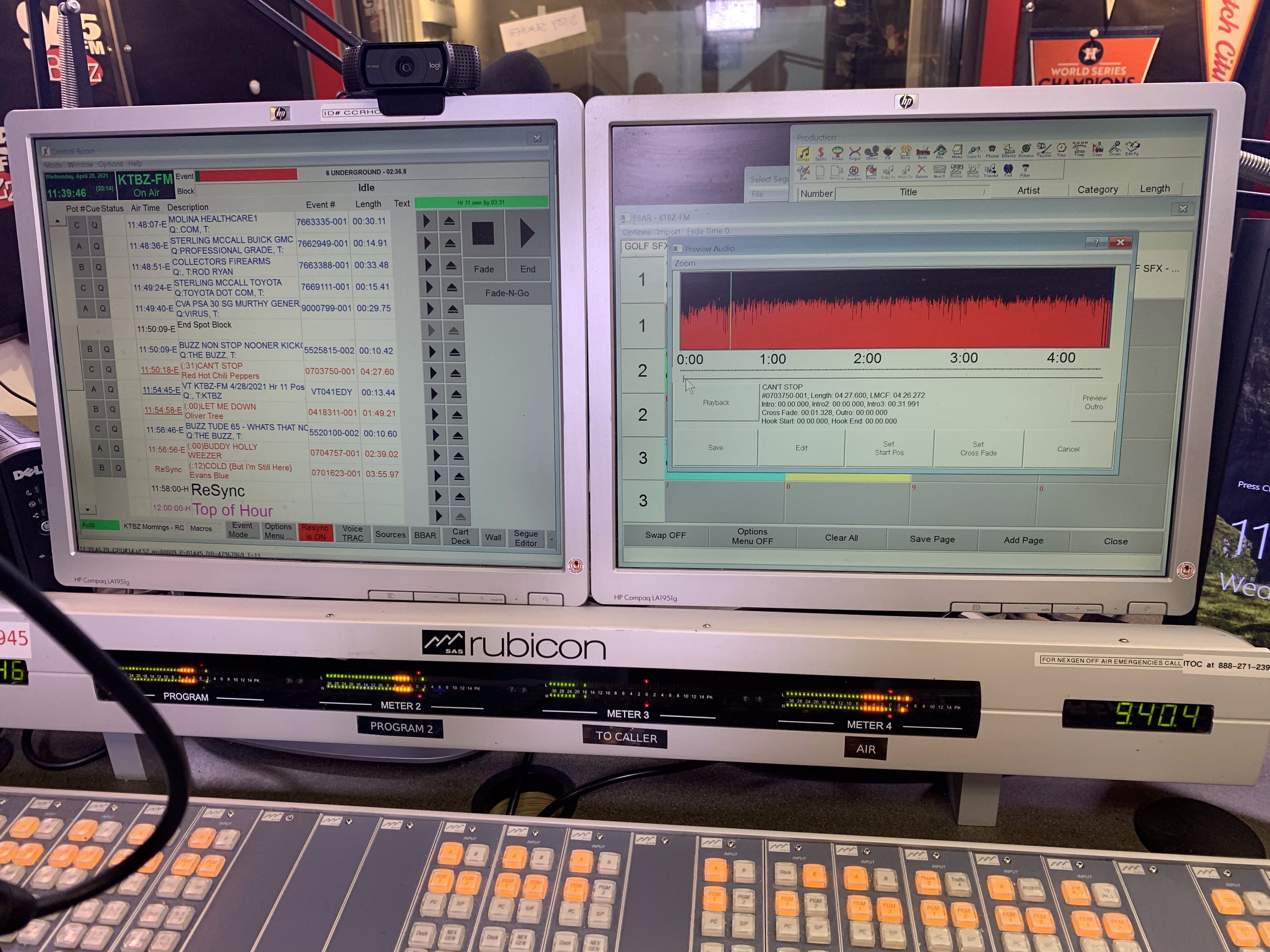

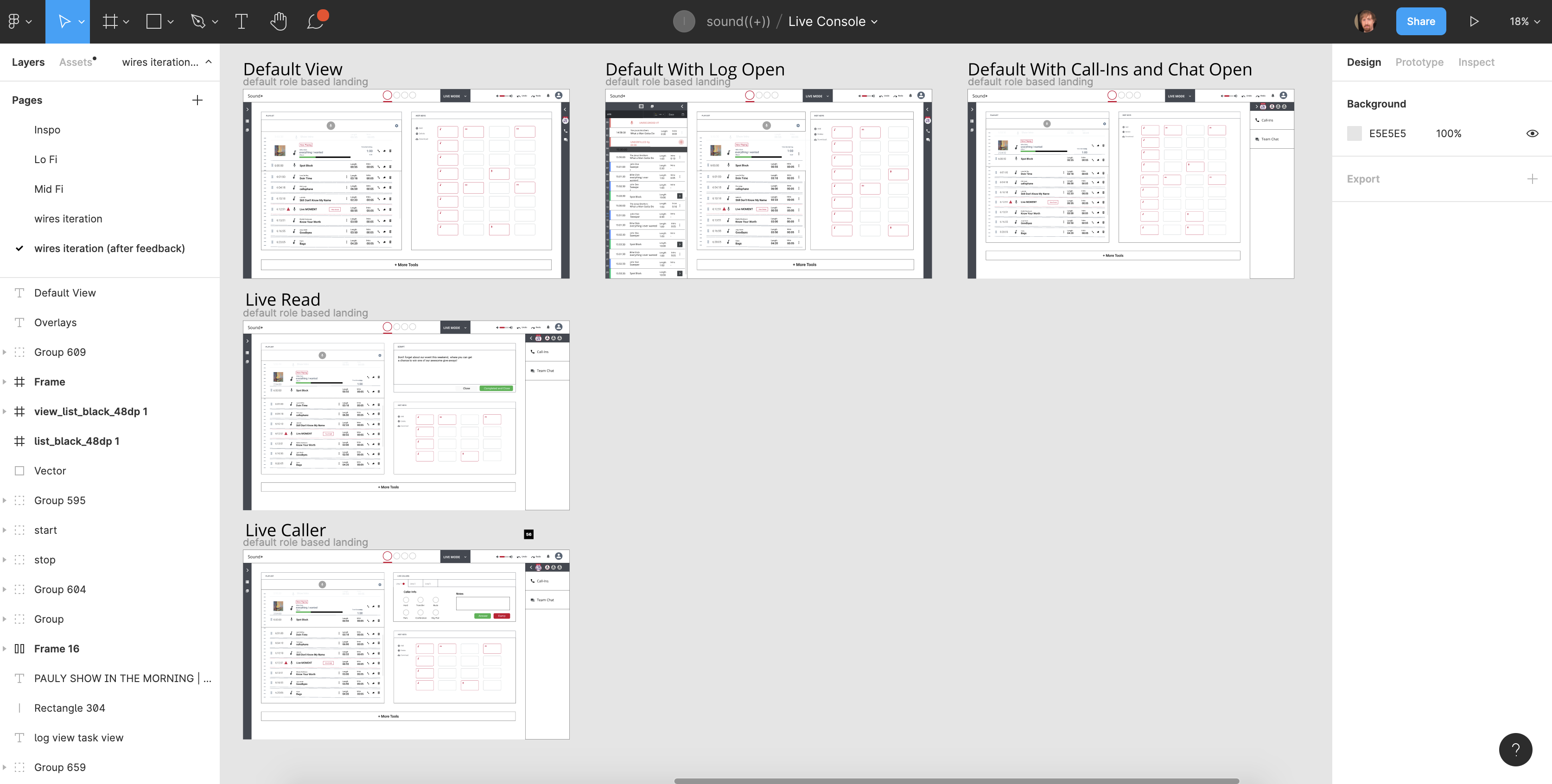

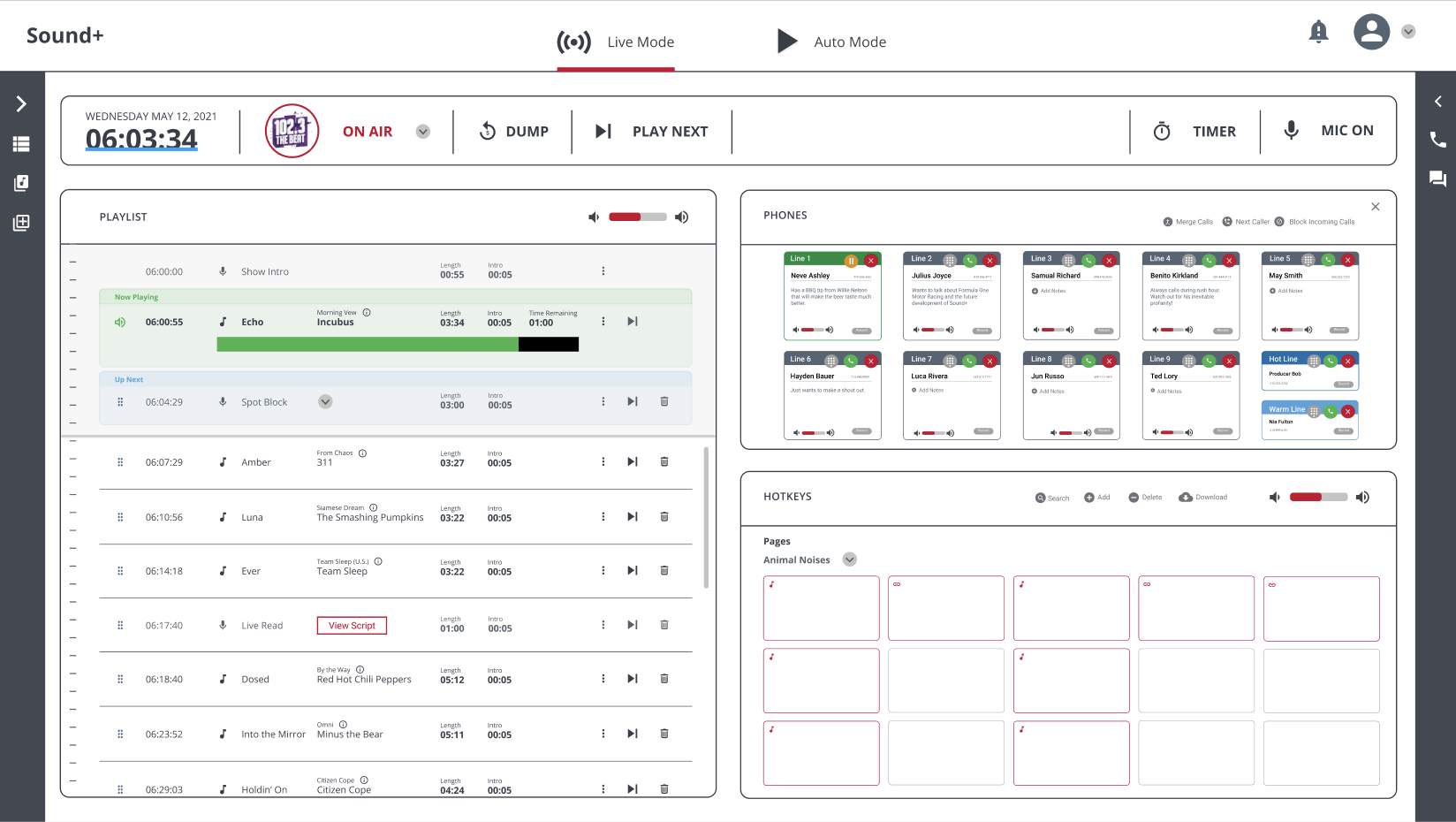

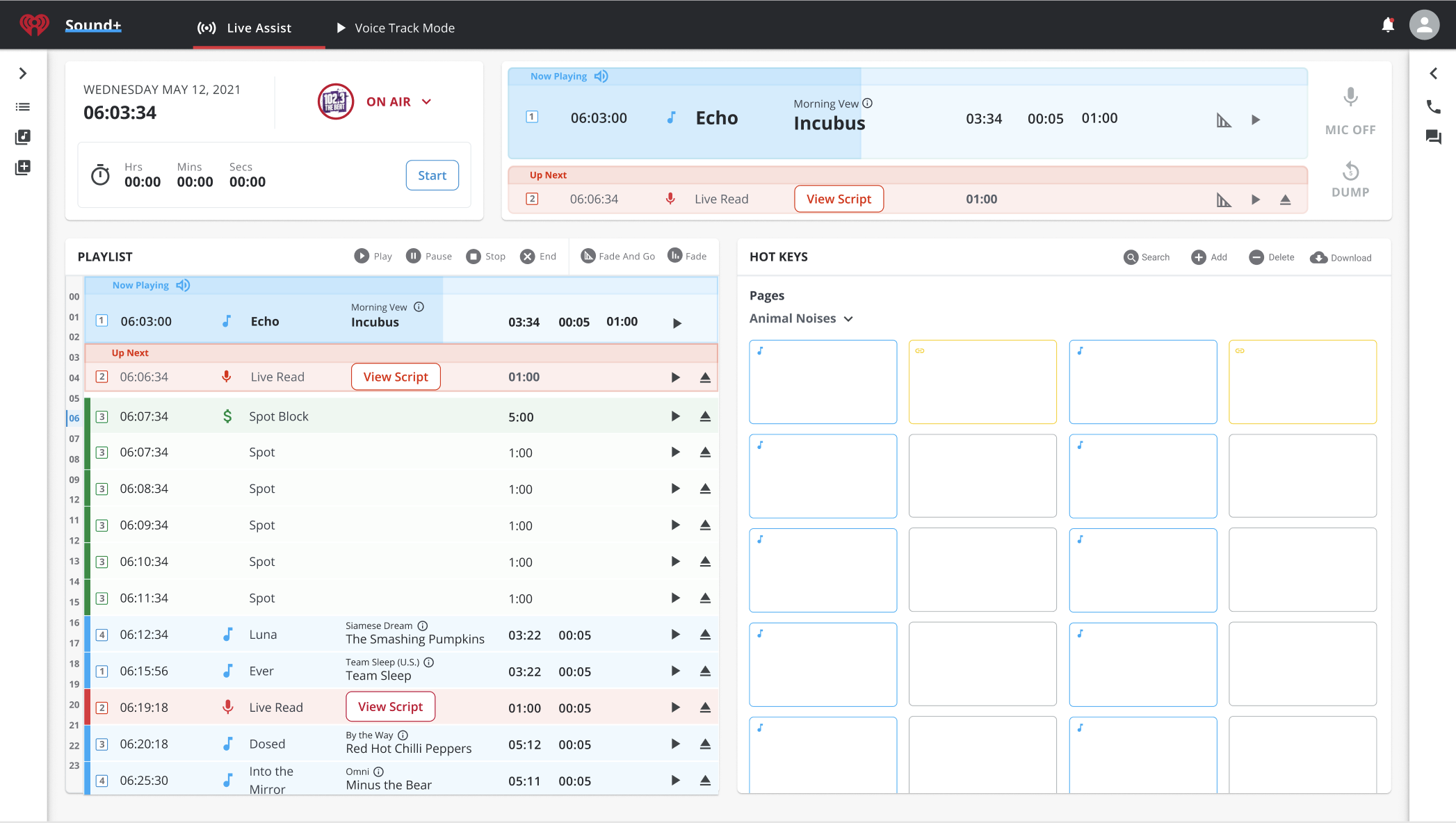

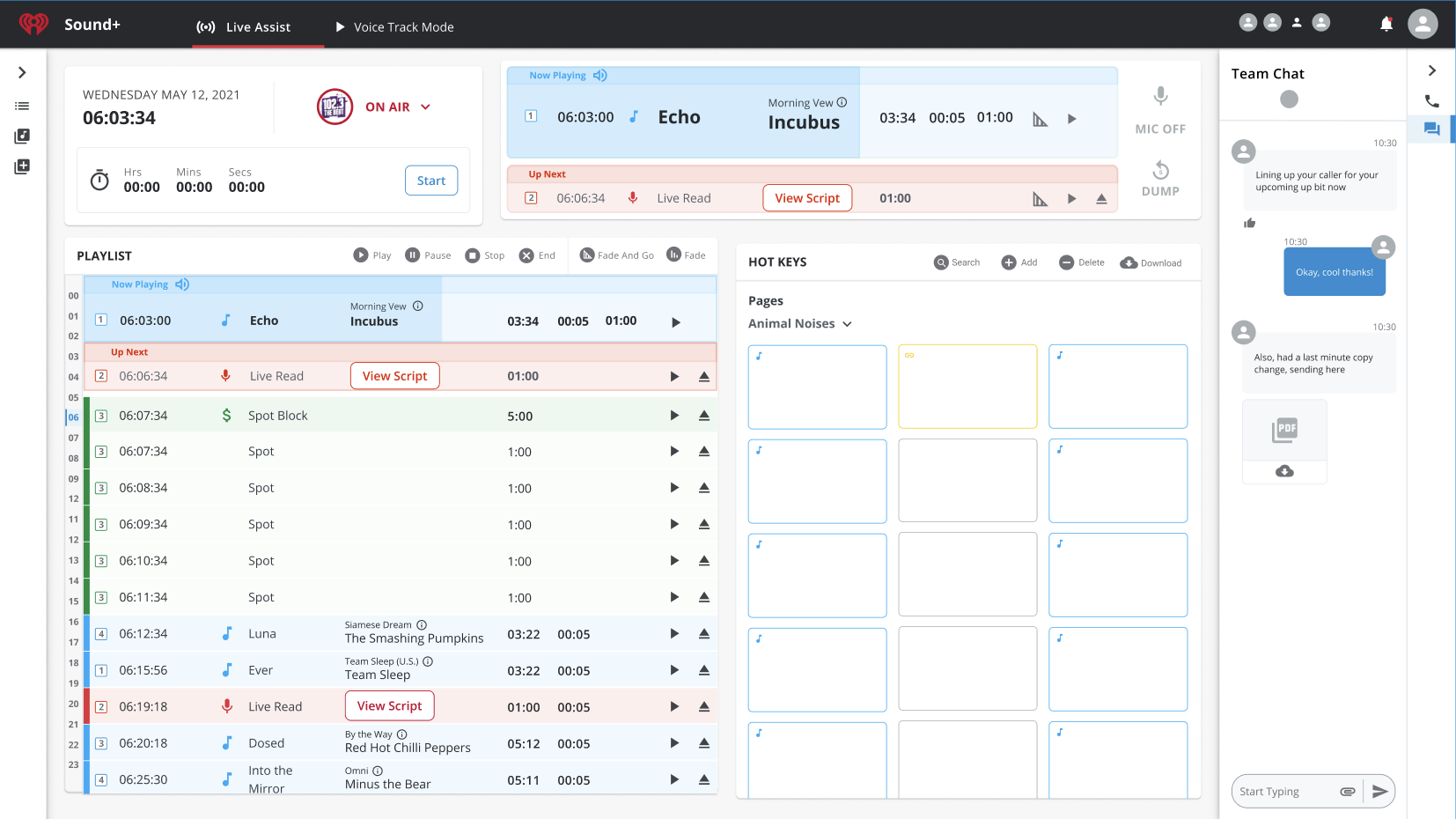

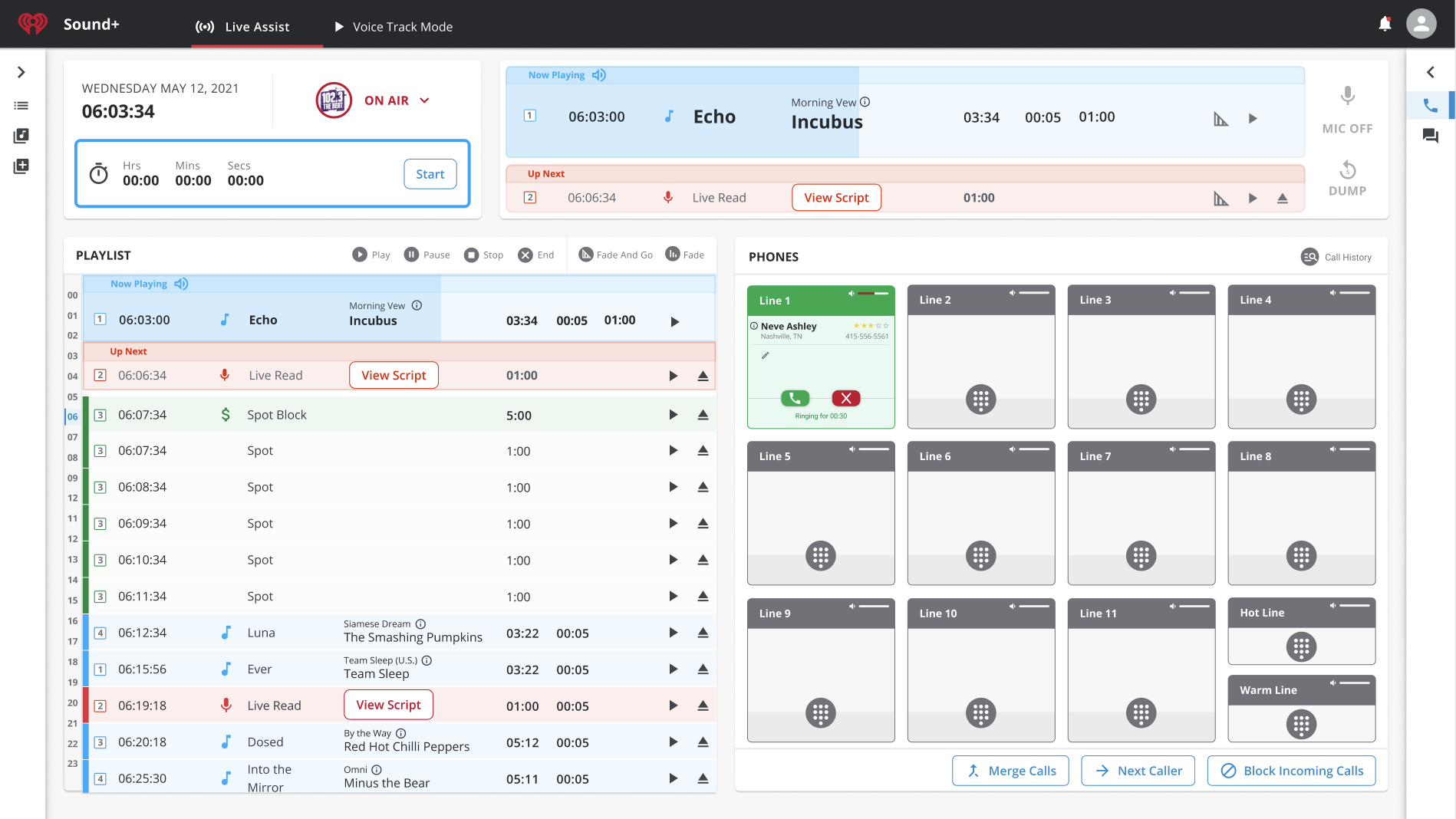

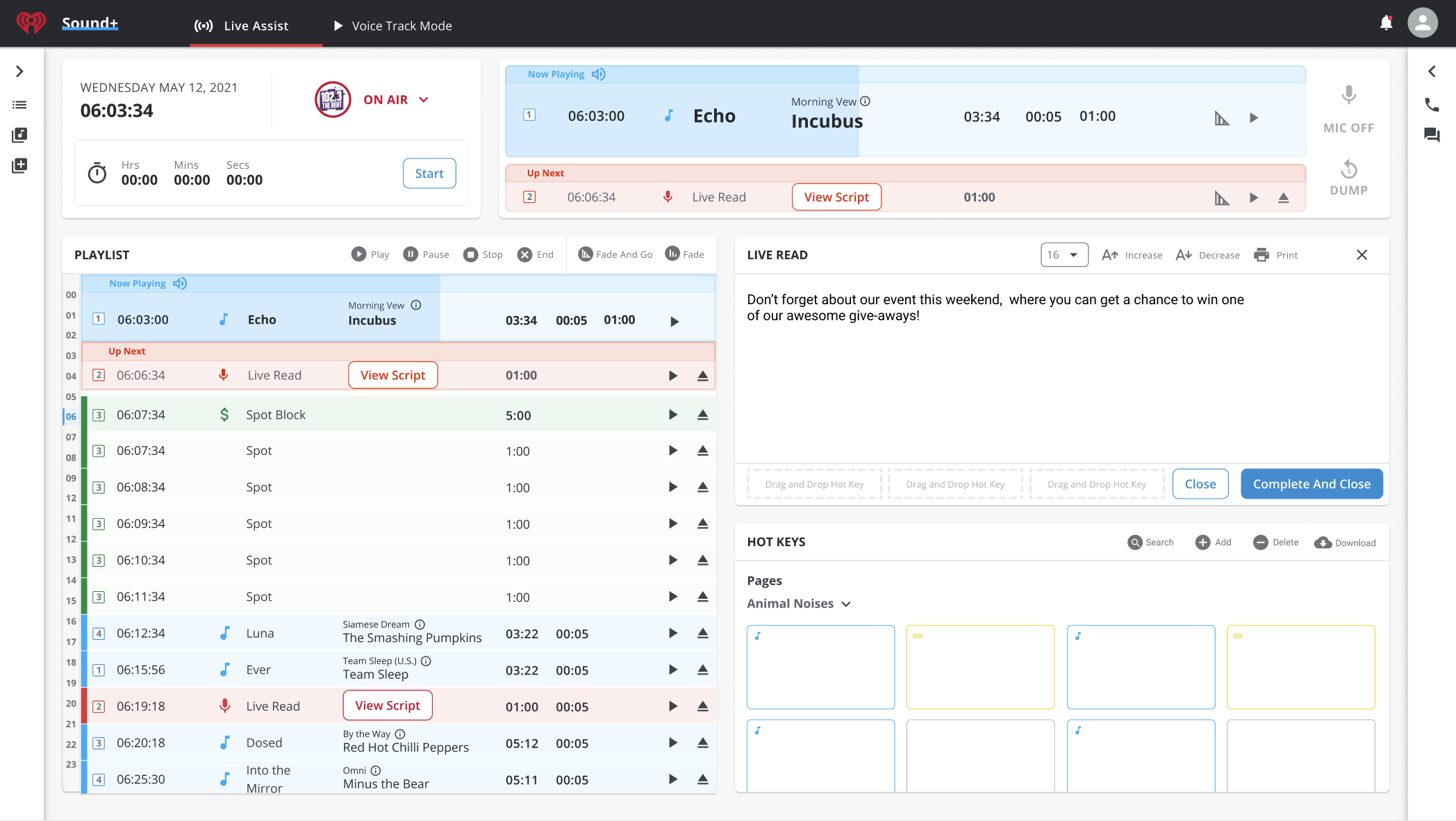

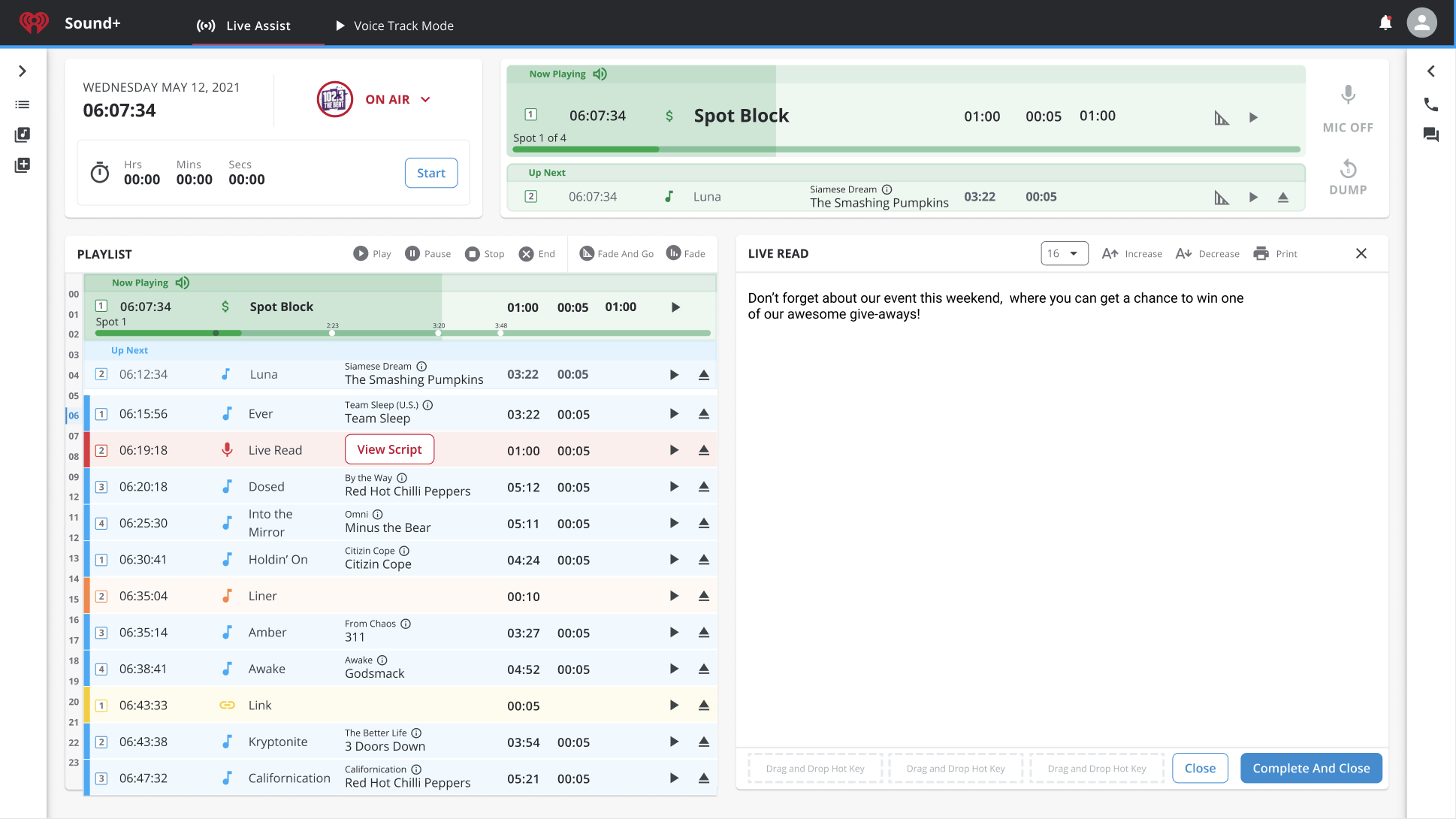

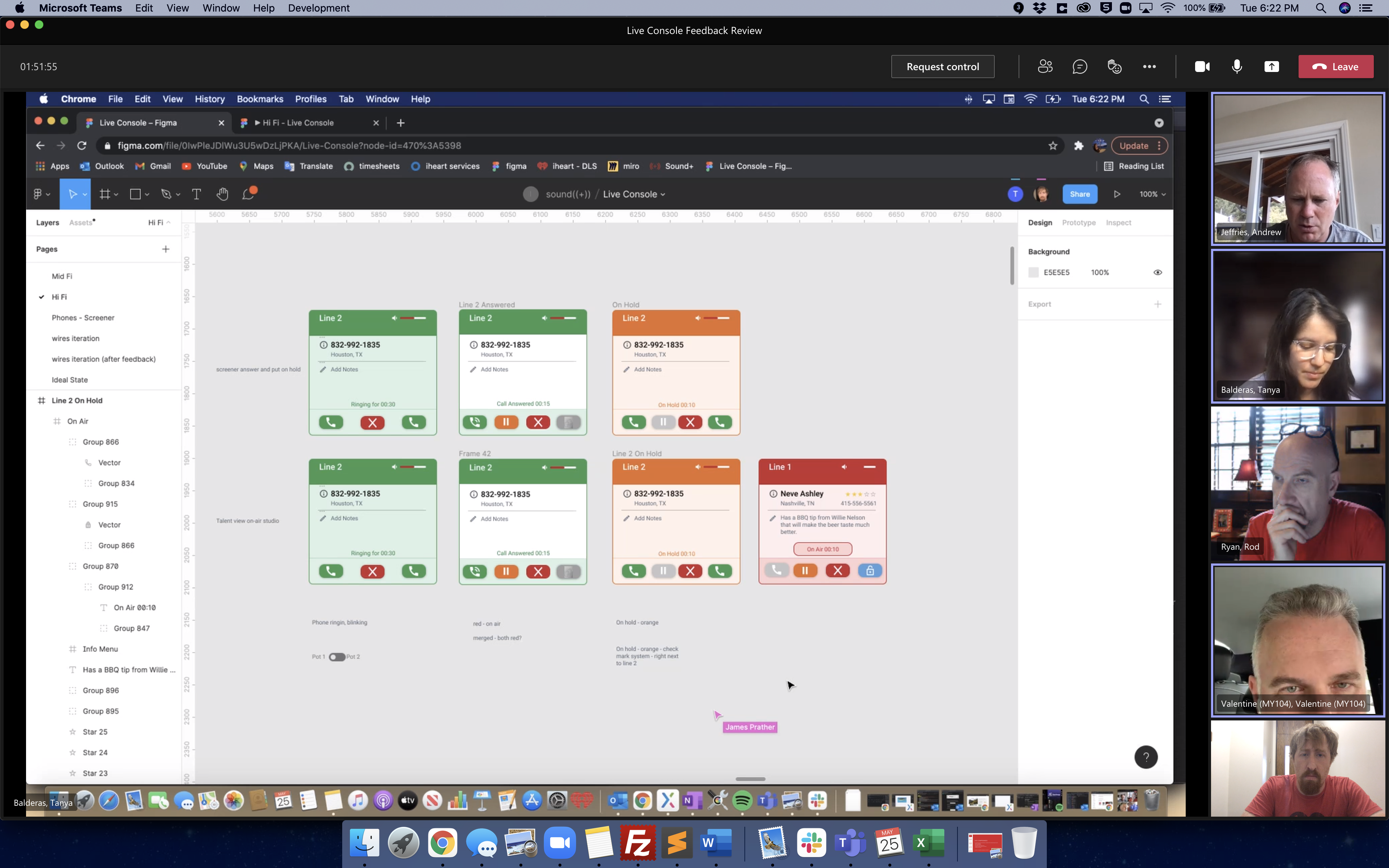

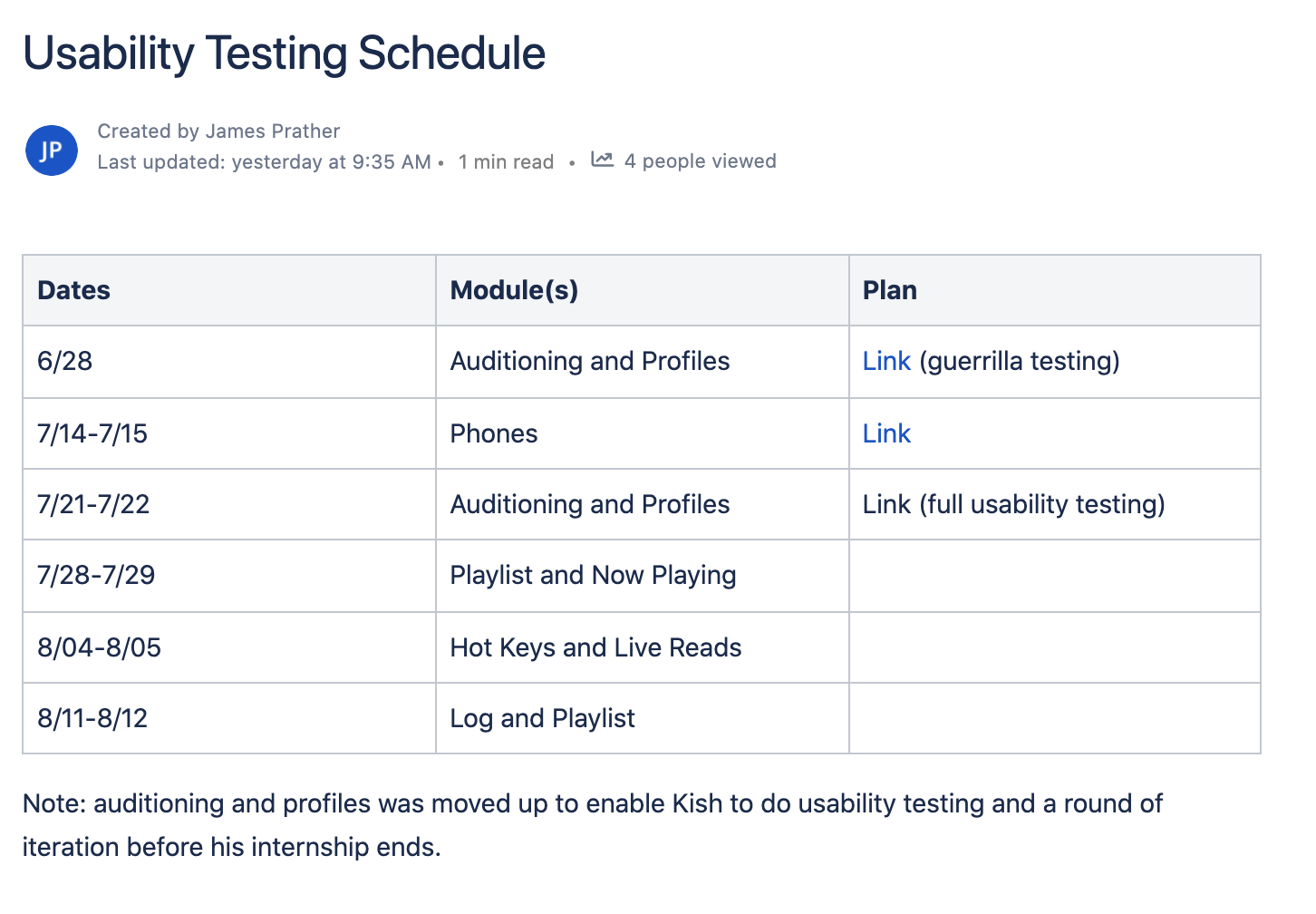

iHeart Media - Sound+ (2022)

Redesigning the live studio experience.

Featuring: interviews, ethnography, and usability testing.

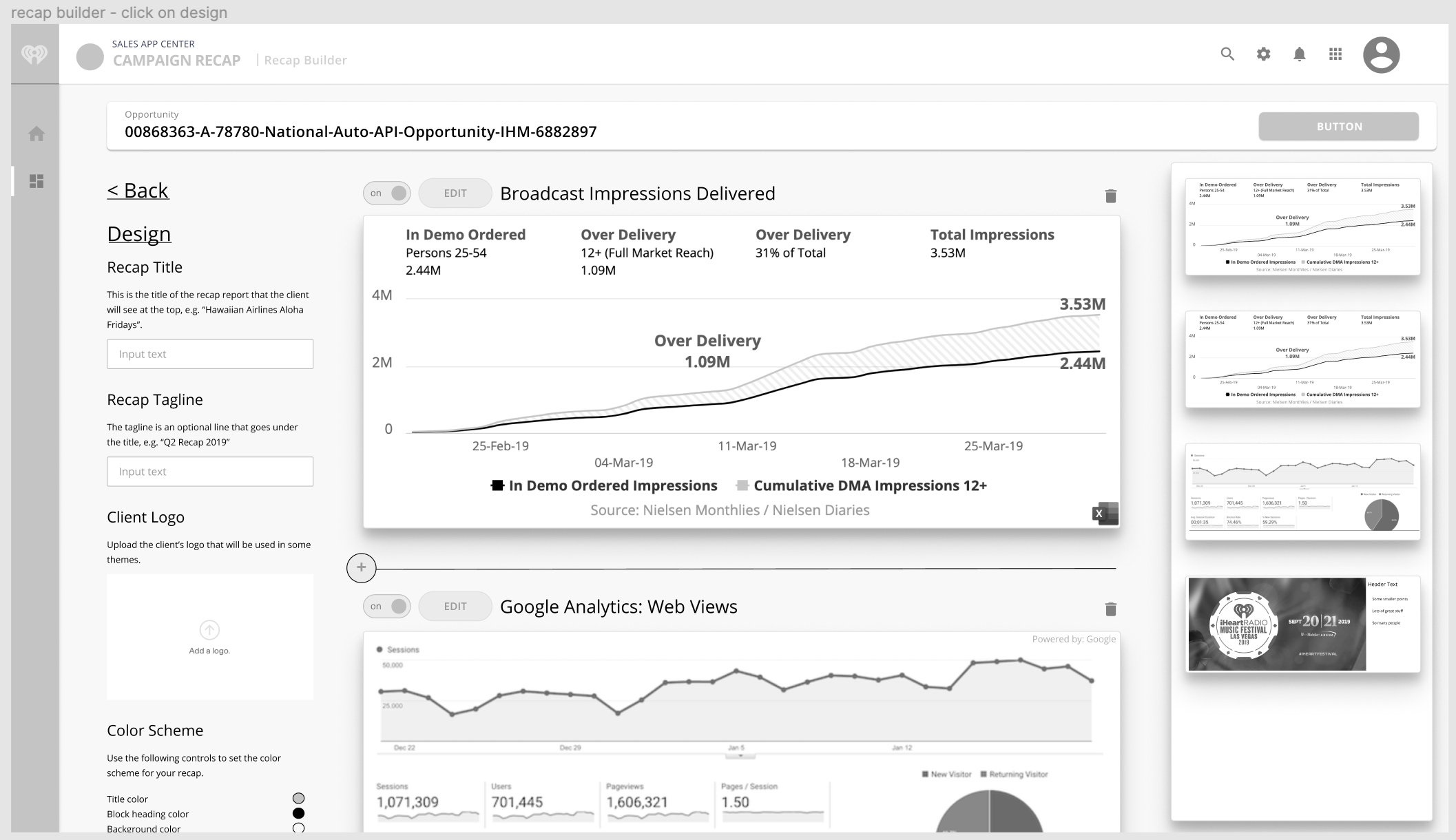

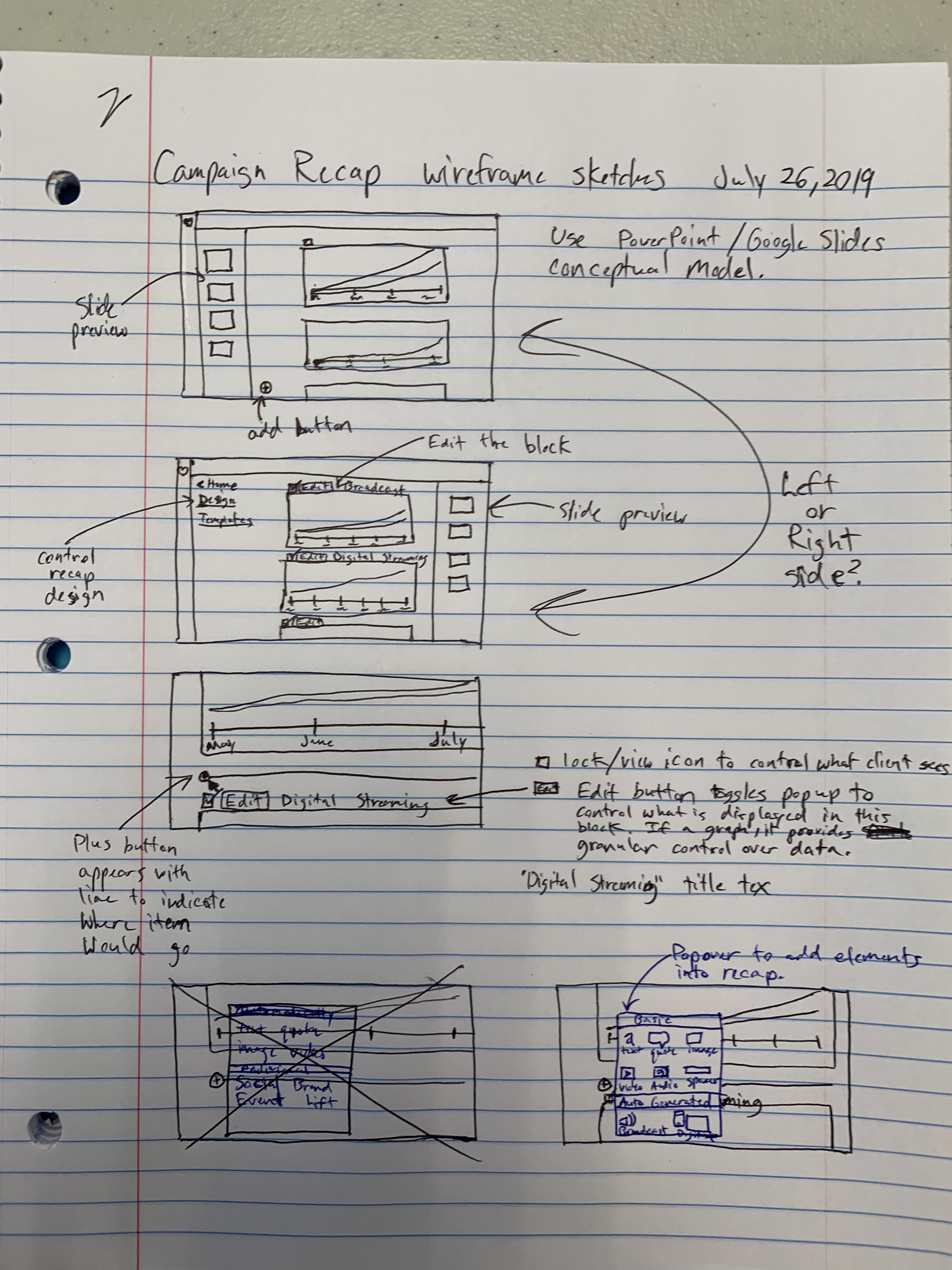

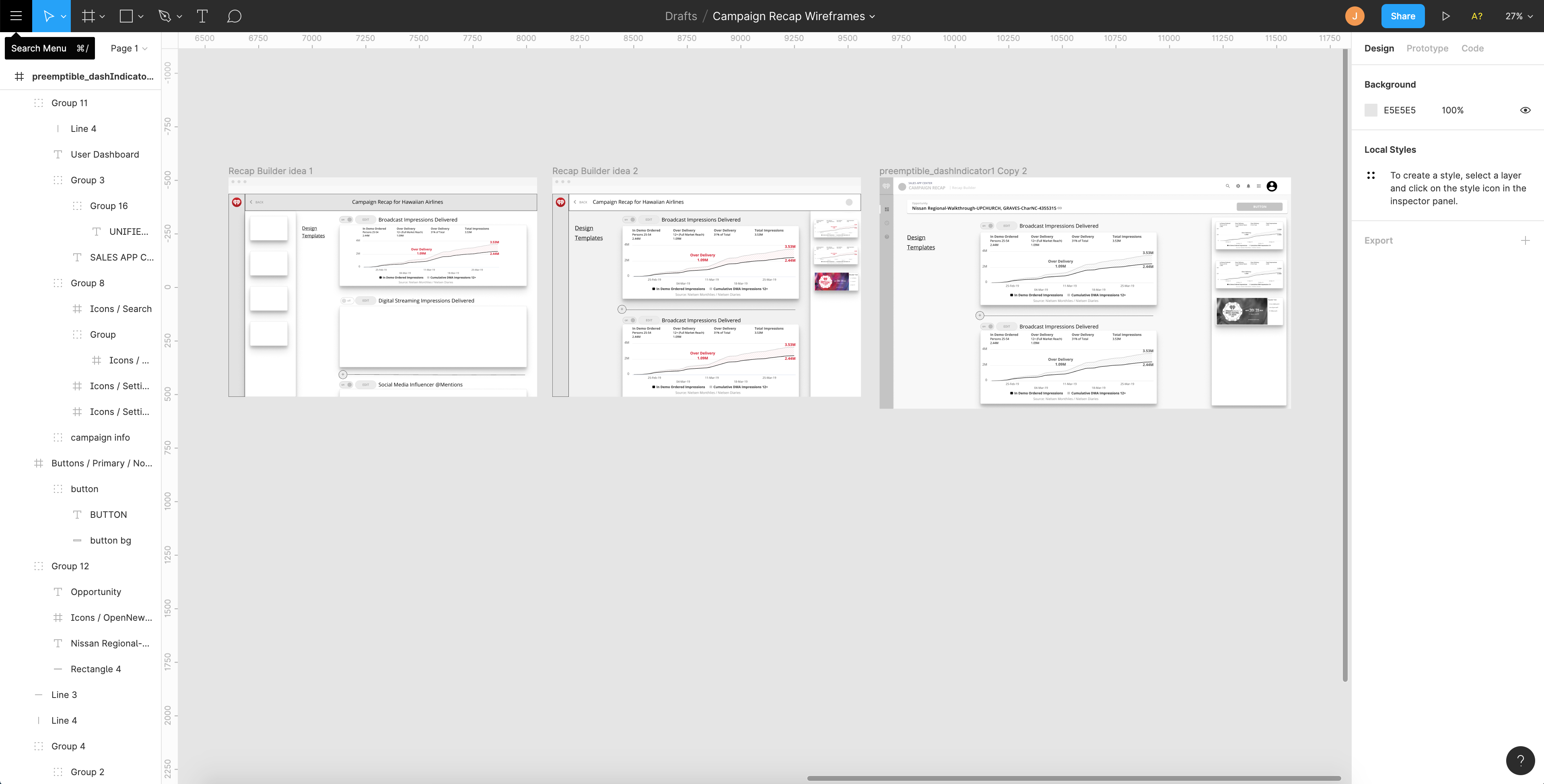

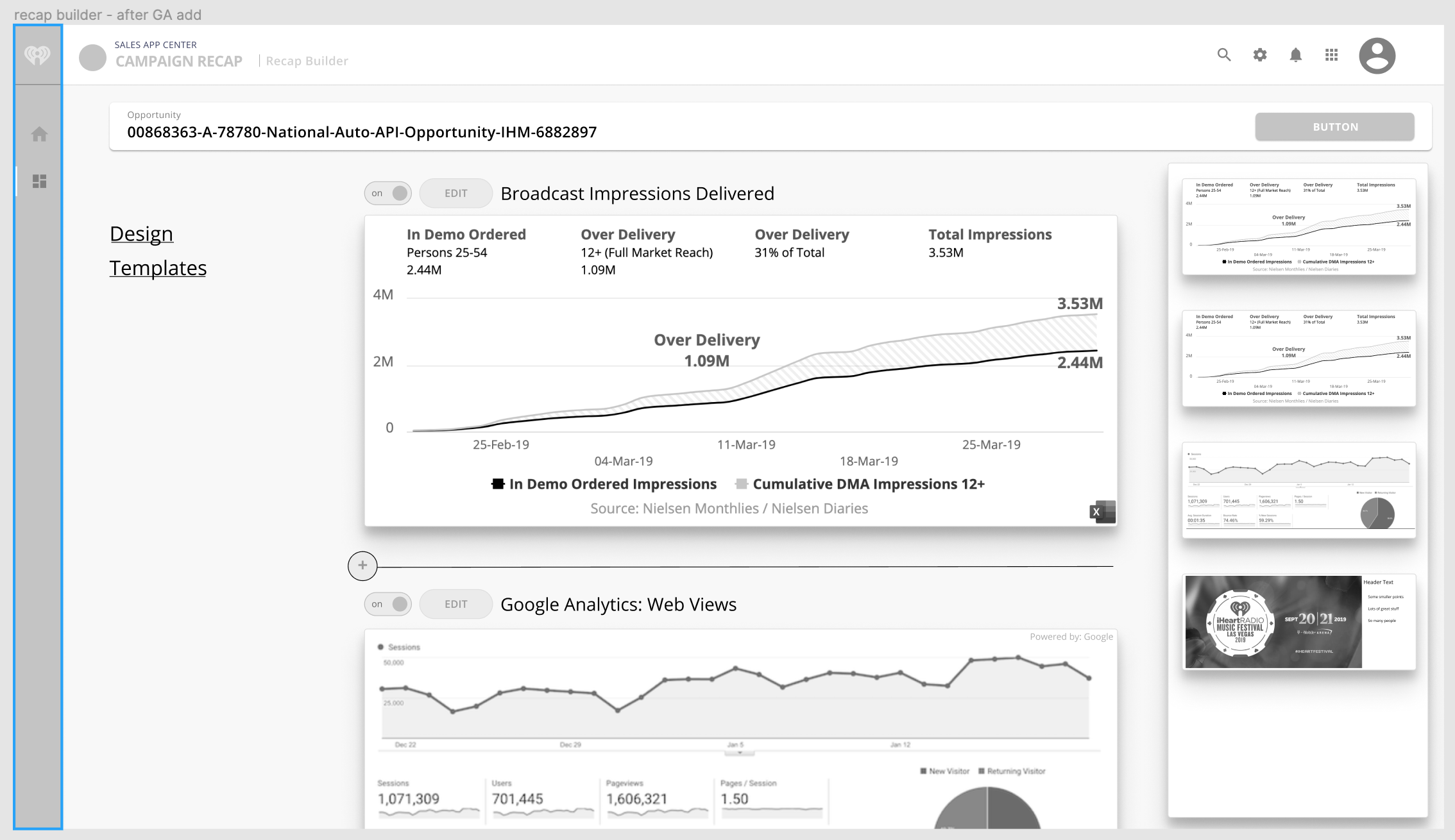

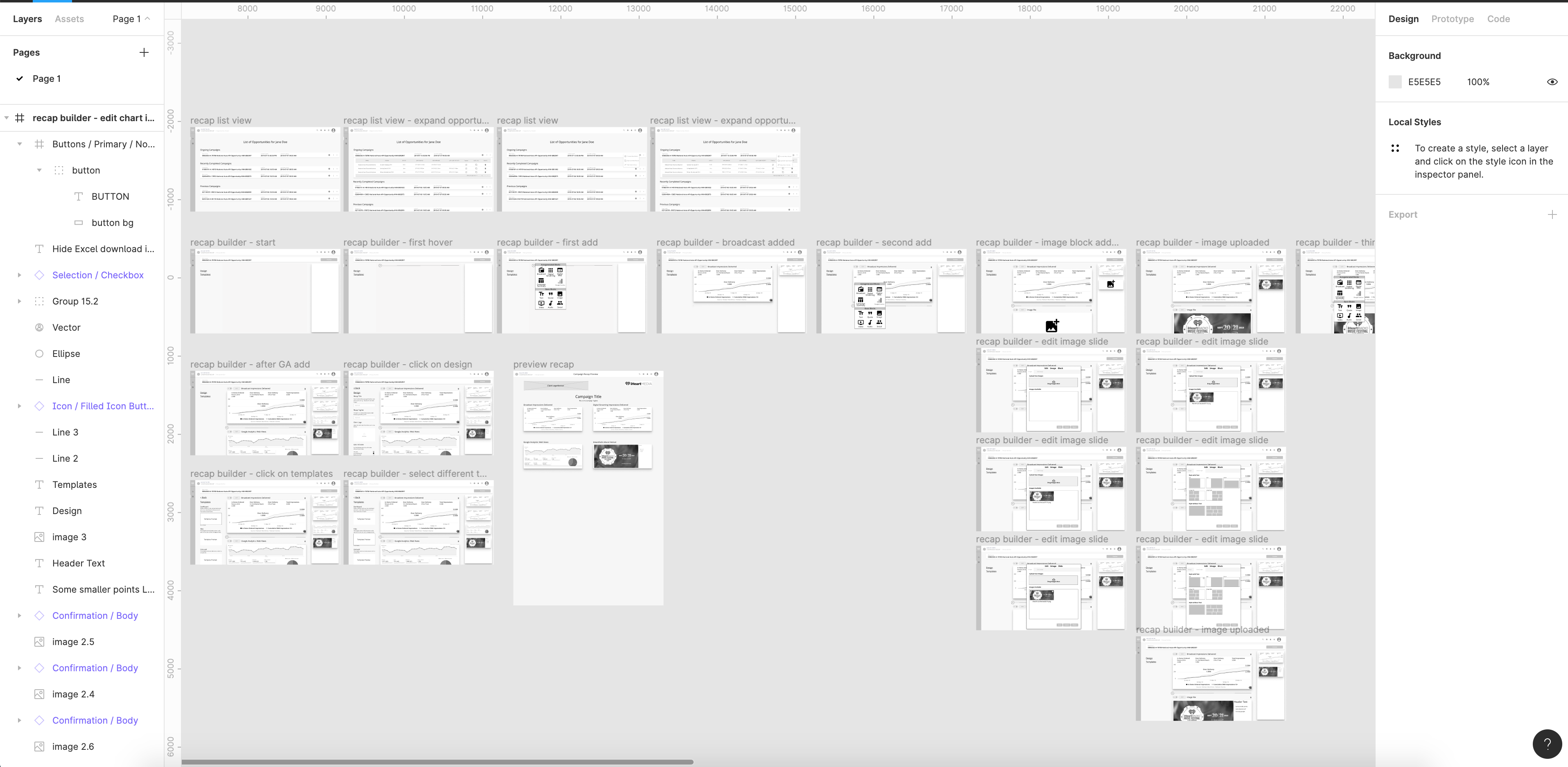

iHeart Media - Campaign Recap (2019)

Helping salespeople provide a better final recap to advertisers.

Featuring: personas, journey maps, service blueprints, whiteboarding, sketches, and wireframes.

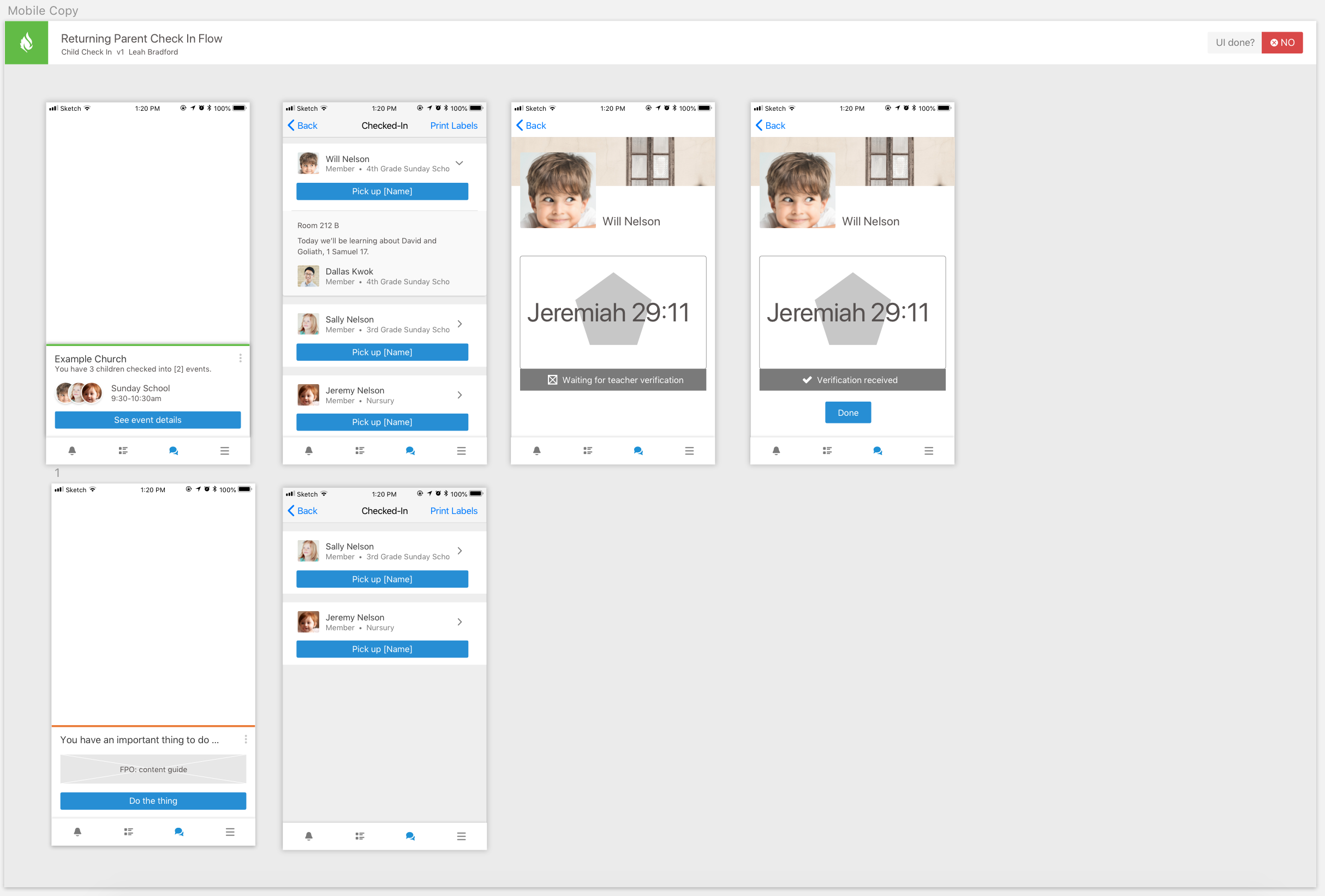

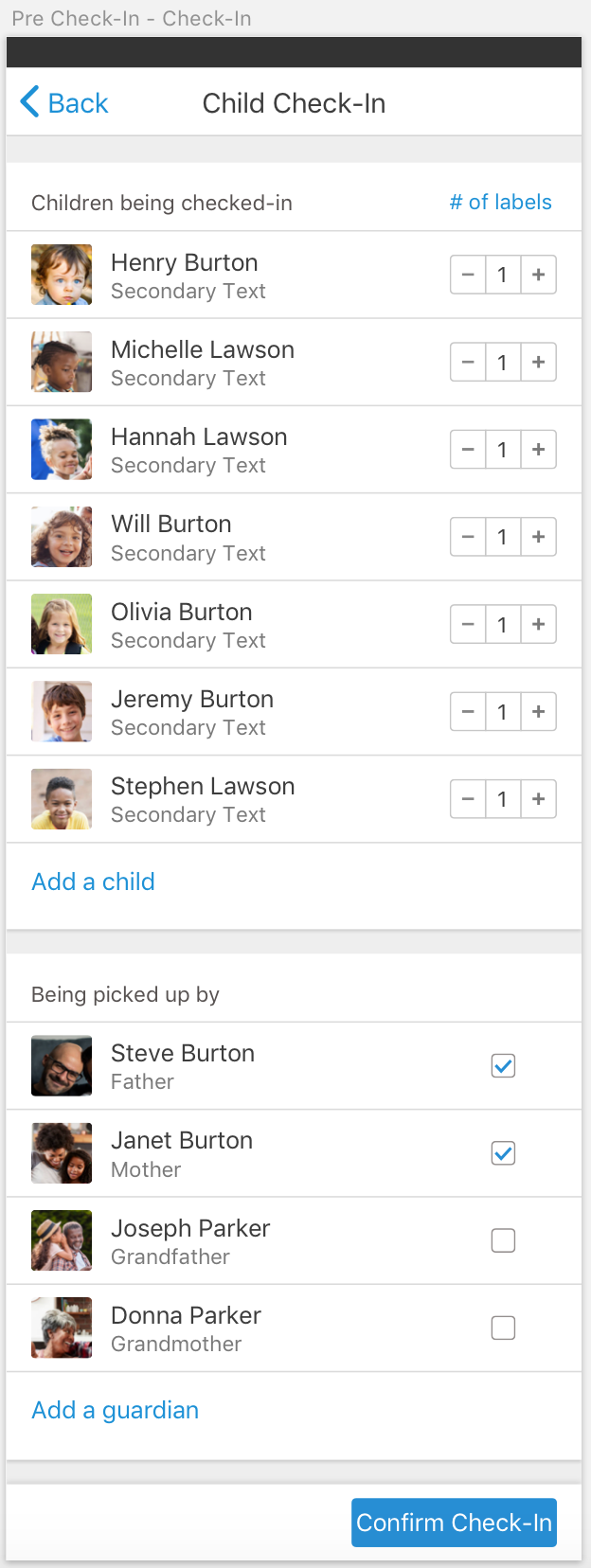

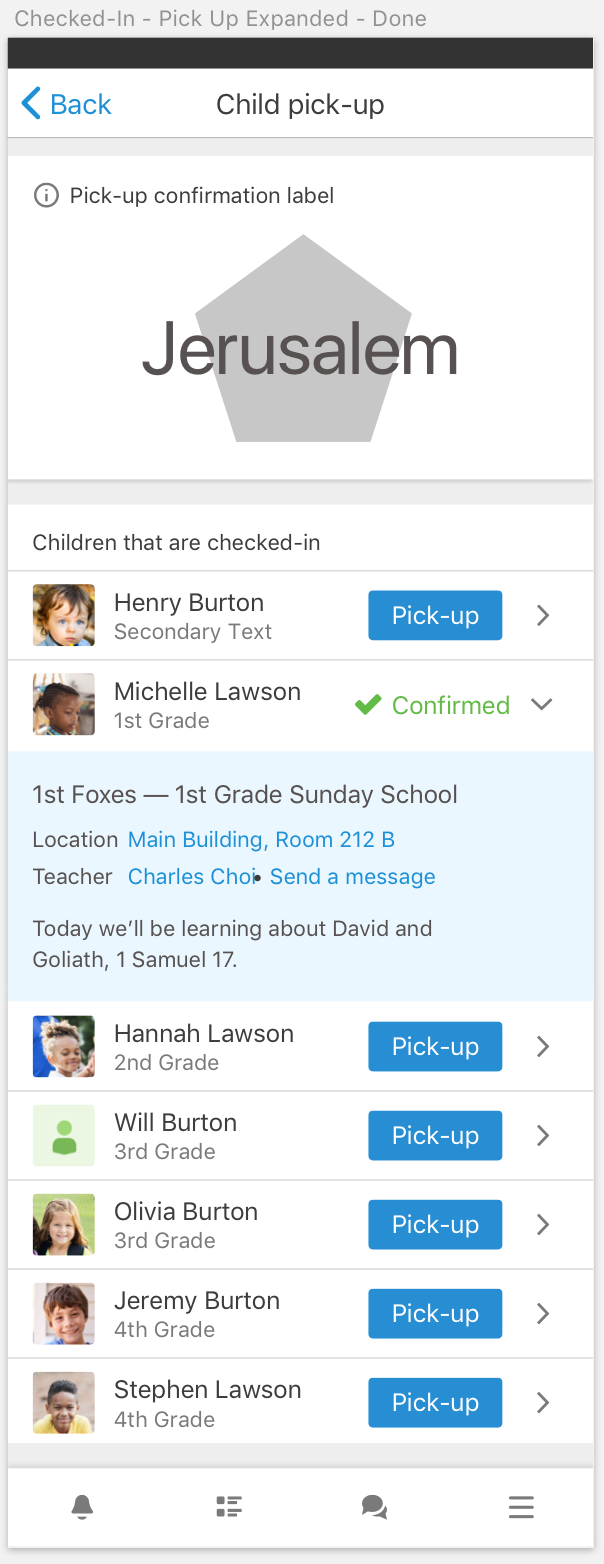

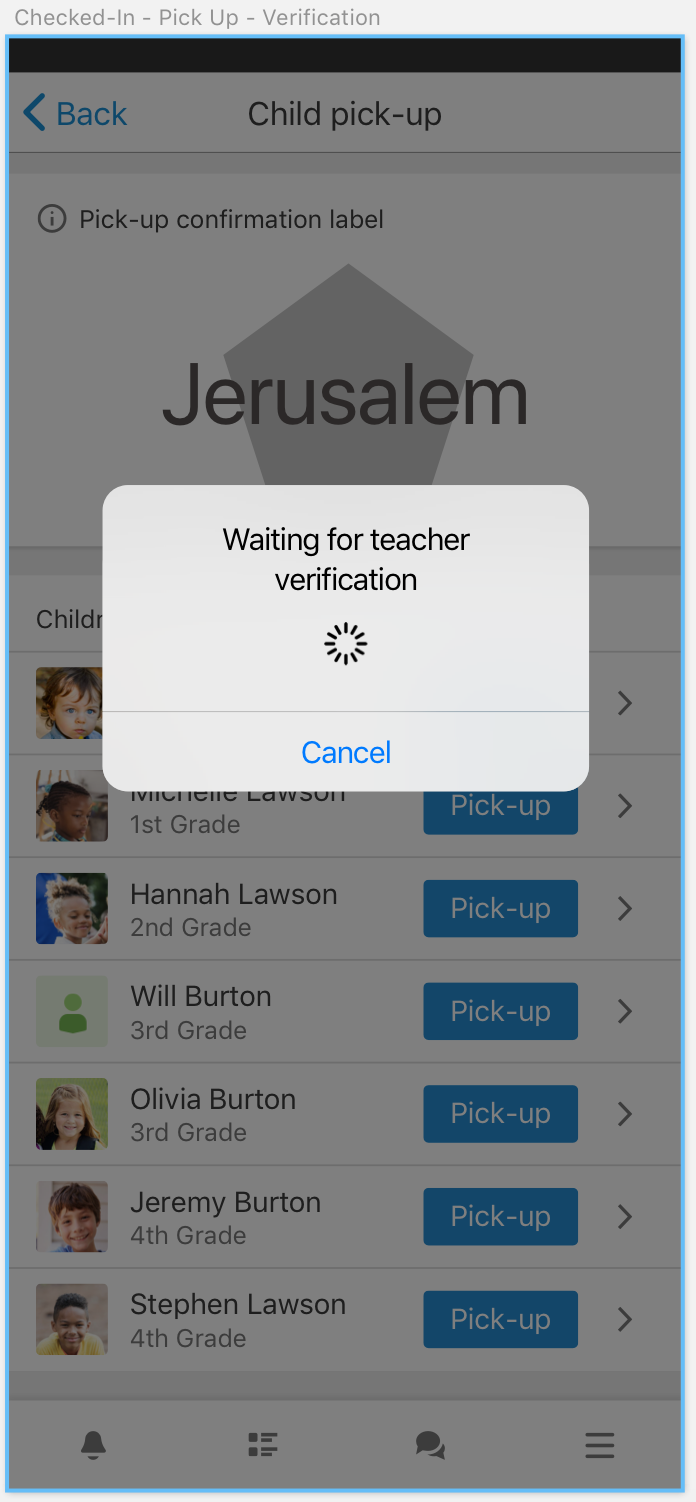

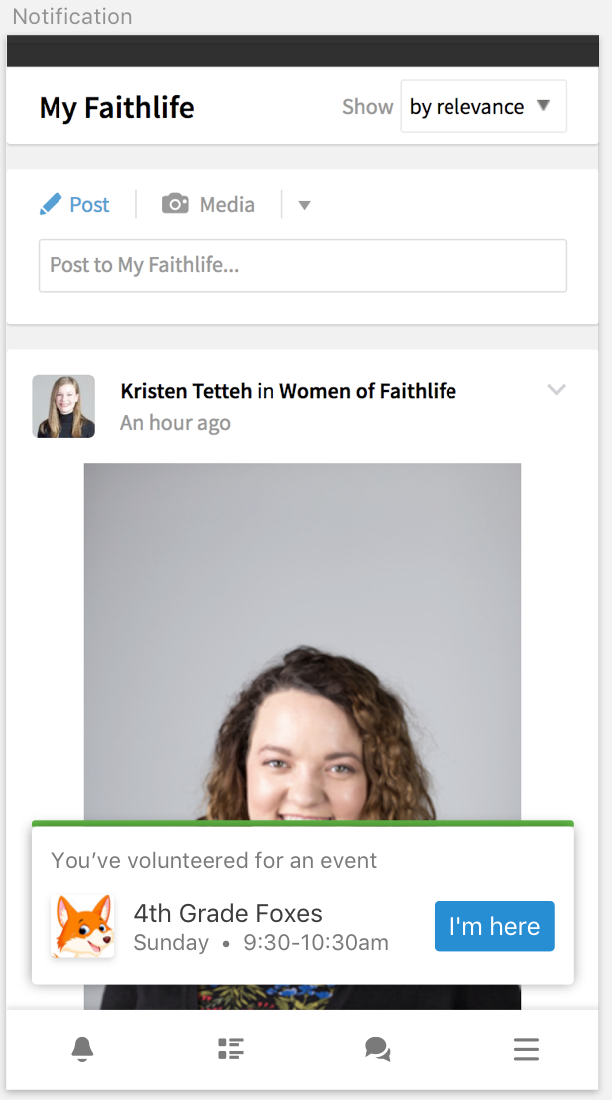

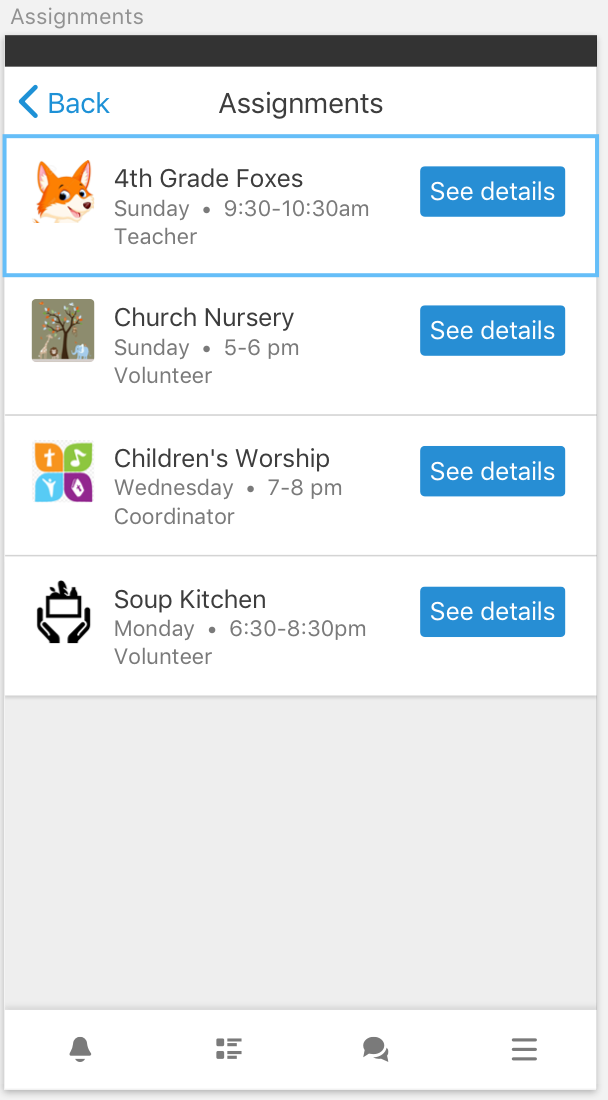

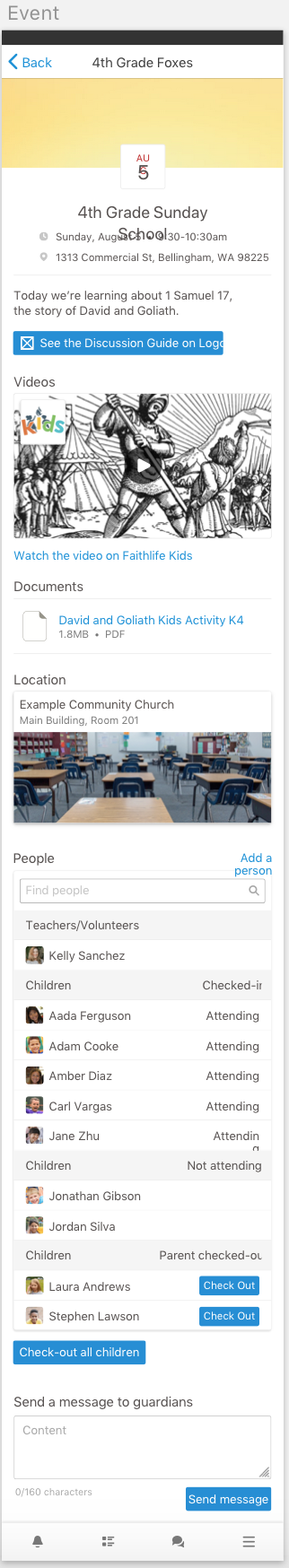

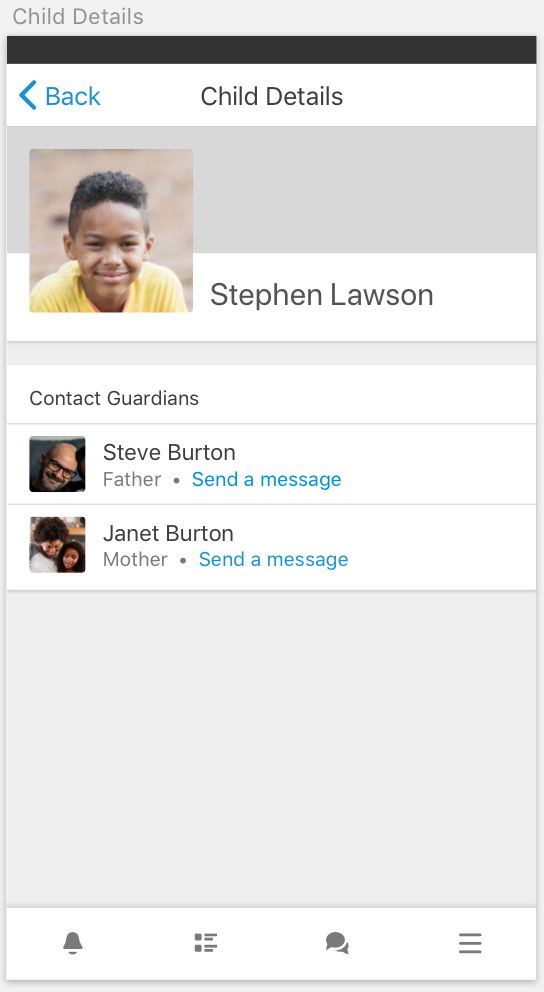

Faithlife - Child Check-In (2018)

Including parents in the spiritual development of their children while keeping the kids safe.

Featuring: competitive analysis, interviews, experience flows, flow charts, whiteboarding, lo-fidelity and hi-fidelity mocks.

Experience

Current Positions:

Abilene Christian University (11 Years)

I teach three courses per semester while I am actively involved in serving the university, mentoring students, working on my personal research, and overseeing student research. I started in the Fall of 2013 as an instructor and in the Fall of 2018 I moved up to Assistant Professor after completing my Ph.D. I was promoted to Associate Professor in 2022.

Google (Summer 2024)

Subject matter expert on generative AI for the open source Computer Science curriculum by Google.

iHeart Media (5 Years)

I started as a Senior UX Researcher (2 years) and was then asked to lead the team through multiple transitions as Director of UX (2 years). Finally, I stepped back into the research work that I enjoy so much as a Principal UX Researcher (current). When people ask why I enjoy working for iHeart, the answer is simple: the problems are fun to solve and the people are fantastic.

Previous Experience:

Faithlife

I spent the summer of 2018 as a UX researcher for Faithlife, helping lead problem and solution discovery, design, and user testing for a brand new product. See the "Child Check-In" card above for the portfolio artifacts from this job.

Testimonials: "10/10 would hire full time." - Leah Bradford, Faithlife UX Designer

Eastern European Mission

Created beautiful front-end experiences, led users and stakeholders in design discovery sessions, and conducted ethnography with missionaries and major donors.

Halff Associates

Developed web and desktop applications to support the work of civil engineers using C, C#, and PHP. Halff is a regional full service engineering firm based in Dallas, TX.

Education

Ph.D. in Computer Science

Master of Arts in Old Testament

Master of Divinity

Master of Science in Applied Cognition and Neuroscience

Bachelor of Science in Computer Science

Peer Reviewed Articles & Research

I conduct research on a variety of topics. As a human-computer interaction researcher, I'm interested in how humans use technology, which can take place in many different contexts. Right now I'm working on novice programmer interaction with large language models, metacognition in novice programmers, human factors and design of programming error messages, and usable privacy and security. I am also the sponsor and research mentor for the SIGCHI Local Chapter at ACU, which conducts research that I oversee.

My Research

2024

James Prather, Brent N. Reeves, Juho Leinonen, Stephen MacNeil, Arisoa S. Randrianasolo, Brett A. Becker, Bailey Kimmel, Jared Wright, Ben Briggs

Proceedings of the 2024 Conference on International Computing Education Research (ICER '24)

Peer-reviewed publication with 20% acceptance rate

Evanfiya Logacheva, Arto Hellas, James Prather, Sami Sarsa, Juho Leinonen

Proceedings of the 2024 Conference on International Computing Education Research (ICER '24)

Peer-reviewed publication with 20% acceptance rate

Paul Denny, David Smith, Max Fowler, James Prather, Brett Becker and Juho Leinonen

Proceedings of the Innovation and Technology in Computer Science Education conference (ITiCSE ’24)

Peer-reviewed publication with 27% acceptance rate

Lauren Margulieux, James Prather, Brent Reeves, Brett Becker, Gozde Cetin Uzun, Dastyni Loksa, Juho Leinonen and Paul Denny

Proceedings of the Innovation and Technology in Computer Science Education conference (ITiCSE ’24)

Peer-reviewed publication with 27% acceptance rate

Erica Kleinman, Reza Habibi, Garrett Powell, Brent N. Reeves, James Prather, Magy Seif El-Nasr

Proceedings of the 2024 ACM Conference on Human Factors in Computing Systems (CHI '24)

Peer-reviewed publication with 26.3% acceptance rate

Paul Denny, Juho Leinonen, James Prather, Andrew Luxton-Reilly, Thezyrie Amarouche, Brett Becker, Brent Reeves

Proceedings of the 2024 ACM Technical Symposium on Computer Science Education (SIGCSE '24)

Peer-reviewed publication with 33% acceptance rate

Seth Poulsen, Sami Sarsa, James Prather, Juho Leinonen, Brett Becker, Arto Hellas, Paul Denny, Brent Reeves

Proceedings of the 2024 ACM Technical Symposium on Computer Science Education (SIGCSE '24)

Peer-reviewed publication with 33% acceptance rate

Paul Denny, James Prather, Brett Becker, James Finnie-Ansley, Arto Hellas, Juho Leinonen, Andrew Luxton-Reilly, Brent Reeves, Eddie Antonio Santos, and Sami Sarsa

Communications of the ACM (CACM)

Contributed Article (peer-reviewed journal article)

James Prather, Brent Reeves, Sami Sarsa, Paul Denny, Brett Becker, Juho Leinonen, Andrew Luxton-Reilly, Garrett Powell, James Finnie-Ansley, and Eddie Antonio Santos

ACM Transactions on Computer-Human Interaction (TOCHI), Vol 31, Iss. 1

Peer-reviewed journal

2023

James Prather, Paul Denny, Juho Leinonen, Brett A. Becker, Ibrahim Albluwi, Michelle Craig, Hieke Keuning, Natalie Kiesler, Tobias Kohn, Andrew Luxton-Reilly, Stephen MacNeil, Andrew Peterson, Raymond Pettit, Brent N. Reeves, Jaromir Savelka

Proceedings of the Innovation and Technology in Computer Science Education conference (ITiCSE ’23)

Peer-reviewed publication

Brent Reeves, Sami Sarsa, James Prather, Paul Denny, Brett Becker, Arto Hellas, Bailey Kimmel, Garrett Powell and Juho Leinonen

Proceedings of the Innovation and Technology in Computer Science Education conference (ITiCSE ’23)

Peer-reviewed publication with 27% acceptance rate

Brett Becker, James Prather, Paul Denny, Andrew Luxton-Reilly, James Finnie-Ansley, and Eddie Antonio Santos

Proceedings of the 2023 ACM Technical Symposium on Computer Science Education (SIGCSE '23)

Peer-reviewed publication with 29% acceptance rate

- Best Paper Award

Juho Leinonen, Brett Becker, Paul Denny, Arto Hellas, James Prather, Brent Reeves, and Sami Sarsa

Proceedings of the 2023 ACM Technical Symposium on Computer Science Education (SIGCSE '23)

Peer-reviewed publication with 29% acceptance rate

James Prather, Paul Denny, Brett Becker, Arisoa Randrianasolo, Robert Nix, Garrett Powell, and Brent Reeves

Proceedings of the 2023 ACM Technical Symposium on Computer Science Education (SIGCSE '23)

Peer-reviewed publication with 29% acceptance rate

James Finnie-Ansley, Paul Denny, Andrew Luxton-Reilly, Eddie Antonio Santos, James Prather, and Brett Becker

Proceedings of the Australasian Computing Education Conference (ACE '23)

Peer-reviewed publication with 43% acceptance rate

- Best Paper Award

2022

Barbara Ericson, Paul Denny, James Prather, Rodrigo Duran, Arto Hellas, Juho Leinonen, Craig Miller, Briana Morrison, Jan Pearce and Susan Rodger

Proceedings of the Innovation and Technology in Computer Science Education conference (ITiCSE ’22)

Peer-reviewed publication

James Prather, Lauren Margulieux, Jacqueline Whalley, Paul Denny, Brent Reeves, Brett Becker, Paramvir Singh, Garrett Powell, and Nigel Bosch

Proceedings of the 2022 Conference on International Computing Education Research (ICER '22)

Peer-reviewed publication with 14% acceptance rate

James Prather, John Homer, Paul Denny, Brett Becker, John Marsden and Garrett Powell

Proceedings of the 2022 World Conference on Computers in Education (WCCE '22)

Peer-reviewed publication

Dastyni Loksa, Lauren Margulieux, Brett A. Becker, Michelle Craig, Paul Denny, Raymond Pettit, and James Prather

ACM Transactions on Computing Education (TOCE)

Peer-reviewed journal

Brett A. Becker, Paul Denny, Daniel Gallagher, James Prather, Colleen Gostomski, Kelli Norris, and Garrett Powell

Proceedings of the 2022 ACM Technical Symposium on Computer Science Education (SIGCSE '22)

Peer-reviewed publication with 29% acceptance rate

Paul Denny, Brett A. Becker, Nigel Bosch, James Prather, Brent Reeves, and Jacqueline Whalley

Proceedings of the 2022 ACM Technical Symposium on Computer Science Education (SIGCSE '22)

Peer-reviewed publication with 29% acceptance rate

James Finnie-Ansley, Paul Denny, Brett Becker, Andrew Luxton-Reilly, and James Prather

Proceedings of the 2022 ACM Australasian Computing Education Research Conference (ACE '22)

Peer-reviewed publication with 38% acceptance rate

- Best Paper Award

2021

Paul Denny, James Prather, Brett A. Becker, Catherine Mooney, John Homer, Zachary Albrecht, Garrett Powell

Proceedings of the 2021 ACM Conference on Human Factors in Computing Systems (CHI '21)

Peer-reviewed publication with 26.3% acceptance rate

Brett A. Becker, Paul Denny, James Prather, Raymond Pettit, Robert Nix, Catherine Mooney

Proceedings of the 2021 ACM Australasian Computing Education Research Conference (ACE '21)

Peer-reviewed publication with 35% acceptance rate

2020

James Prather, Brett A. Becker, Michelle Craig, Paul Denny, Dastyni Loksa, Lauren Margulieux

Proceedings of the 2020 ACM Conference on International Computing Education Research (ICER '20)

Peer-reviewed publication with 22% acceptance rate

- Best Paper Award

Paul Denny, James Prather, Brett A. Becker

Proceedings of the Innovation and Technology in Computer Science Education conference (ITiCSE ’20)

Peer-reviewed publication with 28% acceptance rate

- Best Paper Finalist

2019

Brett A. Becker, Paul Denny, Raymond Pettit, Durell Bouchard, Dennis J. Bouvier, Brian Harrington, Amir Kamil, Amey Karkare, Chris McDonald, Peter-Michael Osera, Janice L. Pearce, and James Prather.

Proceedings of the Innovation and Technology in Computer Science Education conference (ITiCSE ’19)

Peer-reviewed publication

Paul Denny, James Prather, Brett Becker, Zachary Albrecht, Dastyni Loksa and Raymond Pettit

Proceedings of the 19th Koli Calling International Conference on Computing Education Research (Koli Calling '19)

Peer-reviewed publication with 37% acceptance rate

Brett A. Becker, Paul Denny, Raymond Pettit, Durell Bouchard, Dennis J. Bouvier, Brian Harrington, Amir Kamil, Amey Karkare, Chris McDonald, Peter-Michael Osera, Janice L. Pearce, and James Prather.

Proceedings of the Innovation and Technology in Computer Science Education conference (ITiCSE ’19)

Peer-reviewed publication

James Prather, Raymond Pettit, Brett Becker, Paul Denny, Dastyni Loksa, Alani Peters, Zachary Albrecht, and Krista Masci

ACM Inroads 10 (2), 42-49

Re-print in official ACM magazine

James Prather, Raymond Pettit, Brett Becker, Paul Denny, Dastyni Loksa, Alani Peters, Zachary Albrecht, and Krista Masci

Proceedings of the 2019 ACM Technical Symposium on Computer Science Education (SIGCSE)

Peer-reviewed publication with 32% acceptance rate.

- 1st Place Best Paper Award

2018

James Prather, Raymond Pettit, Kayla McMurry, Alani Peters, John Homer, and Maxine Cohen

Proceedings of the 2018 ACM Conference on International Computing Education Research (ICER)

Peer-reviewed publication with 22% acceptance rate.

James Prather

Doctoral dissertation

Committee Members: Maxine Cohen (chair), Raymond Pettit, and Michael Laszlo

2017

James Prather, Raymond Pettit, Kayla McMurry, Alani Peters, John Homer, Nevan Simone, and Maxine Cohen

Proceedings of the 2017 ACM Conference on International Computing Education Research (ICER)

Peer-reviewed publication with 27% acceptance rate.

James Prather, Robert Nix, Ryan Jessup

15th Annual Workshop on Network and Systems Support for Games (NetGames) in cooperation with ACM SIGMM and ACM SIGCOMM

Peer-reviewed publication with 33% acceptance rate.

Raymond Pettit and James Prather

Journal of Computing Sciences in Colleges 32(4)

Peer-reviewed publication.

Student Research from My Lab

The following papers were written by undergraduate students I mentored or by members of the SIGCHI local chapter I sponsor.

Bailey Kimmel, Austin Geisert, Lily Yaro, Brendan Gipson, Taylor Hotchkiss, Sidney Osae-Asante, Hunter Vaught, Grant Wininger, Chase Yamaguchi

Extended Abstracts of the 2024 CHI Conference on Human Factors in Computing Systems (CHI '24)

Peer-reviewed publication with 38% acceptance rate

Davis Arnold, Benjamin Blackmon, Brendan Gipson, Anthony Moncivais, Garrett Powell, Megan Skeen, Michael Thorson, Nathan Wade

Extended Abstracts of the 2022 CHI Conference on Human Factors in Computing Systems (CHI '22)

Peer-reviewed publication with 50% acceptance rate

- 3rd Place Student Research Competition

Adam Garcia, Garrett Powell, Davis Arnold, Luis Ibarra, Matthew Pietrucha, Michael Thorson, Abigail Verhelle, Nathan Wade, Samantha Webb

Extended Abstracts of the 2021 CHI Conference on Human Factors in Computing Systems (CHI '21)

Peer-reviewed publication with 50% acceptance rate

John Marsden, Zachary Albrecht, Paula Berggren, Jessica Halbert, Kyle Lemons, Anthony Moncivais, Matthew Thompson

Extended Abstracts of the 2020 CHI Conference on Human Factors in Computing Systems (CHI '20)

Peer-reviewed publication with 29% acceptance rate

John Marsden

Proceedings of the 51st ACM Technical Symposium on Computer Science Education (SIGCSE '20)

Peer-reviewed publication

Collin Blanchard, Holly Buff, Travis Cook, Raquel Dottle, Gideon Luck, Alani Peters, Virginia Pettit, Isaak Ramirez, and Jessica Wininger

Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems (CHI '18)

Peer-reviewed publication with 50% acceptance rate

Kayla Holcomb McMurry, Nevan Simone

Proceedings of the 47th ACM Technical Symposium on Computer Science Education (SIGCSE '16)

Peer-reviewed publication

- 1st Place Student Research Competition

Monographs

Interests

I'm a husband and father of four kids, so when I'm not at work, I'm usually hanging out with my family, going on adventures with them, or travelling. I love travelling. My wife and I were study abroad sponsors to Oxford during the summer of 2017 (I kept a daily travel blog if you'd like to see what that was like). I've travelled internationally to attend conferences and present research. I'm also a committed Christian who believes in living the words of Jesus ("love your neighbor as yourself"), the words of the prophets ("do not oppress the widow, the orphan, the alien, or the poor"), and the words of Paul ("do nothing from selfish ambition or conceit, but in humility regard others as better than yourselves"). I think Christianity calls for inclusion and Jesus models that behavior. I enjoy a good theological discussion. I also enjoy combining my two interests, Computer Science and religion, into work in Digital Humanities and am part of a three-year grant project to perform data analysis on ancient Ethiopic manuscripts of the Old Testament.

In the rare moment that I have downtime, I enjoy playing video games with my students (mostly Blizzard's Overwatch), playing D&D with friends, reading a good fantasy/steampunk/sci-fi novel, or writing my own novel (yeah, everyone is writing a novel).

Awards

Grants:

- Google AIR Grant: $60,000

Research:

- Best Paper Award, SIGCSE, 2023

- Best Paper Award, ACE, 2023

- Best Paper Award, ACE, 2022

- Best Paper Award, ICER, 2020

- Best Paper Finalist, ITiCSE, 2020

- Best Paper Award, SIGCSE, 2019

University:

- Dean's Award for Research, College of Business Administration & Technology, 2023

- Outstanding Junior Faculty (Weathers Fellowship), College of Business Administration & Technology, 2021

- Teacher of the Year, School of Information Technology and Computing, 2020

- Undergraduate Research Mentor of the Year (STEM), ACU Office of Research and Sponsored Programs, 2018

- Teacher of the Year, School of Information Technology and Computing, 2017

- Teacher of the Year, School of Information Technology and Computing, 2015

- Exceptional Leadership and Dedication Award, 2013

A superb educator

Damn fine researcher

-Josh M., Sr. UX Designer @ iHeartMedia

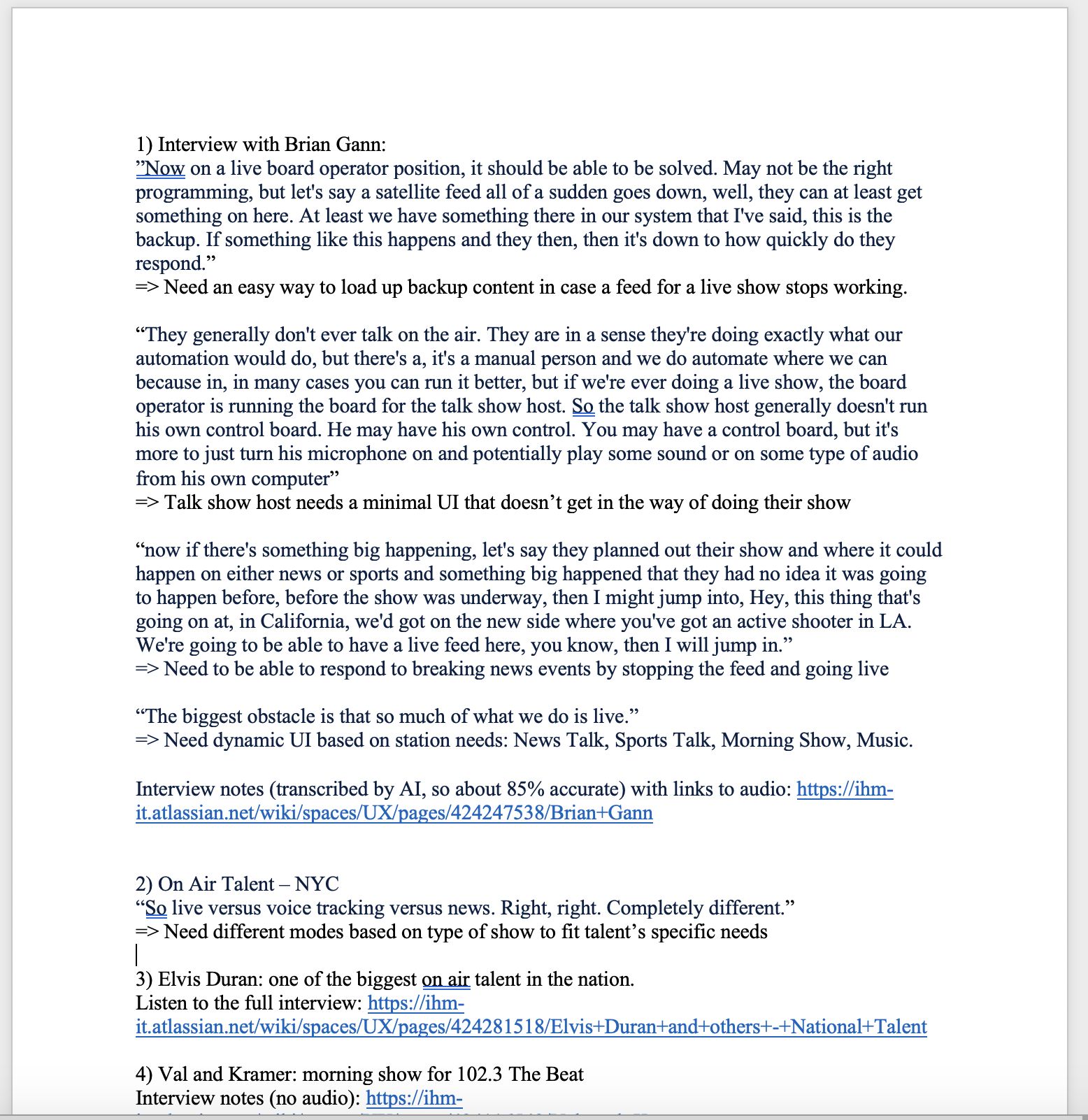

I had previously done quite a bit of research on live shows, but for a different portal that would be used by production directors. I was therefore asked at the outset of this project to go back through old user interviews that I had done and synthesize what we know about live shows into a one-pager for management consumption.

I then began interviewing selected top talent from across the nation in focus groups to get a better understanding of how talent do their jobs, what it's like to run a live radio show, how they use the current software, and what the new cloud-native software needs to be able to do to support their work.

The design sprint was a total of four days: three days in a row, then a week to prototype, and a final day for the finale and validation. Each day we had iHeart's top talent on the call and participating in the design exercises. Buy-in from stakeholders is key in design and this design sprint was perfect for getting it. All of our talent were excited to be helping create the software they would be using in the future.

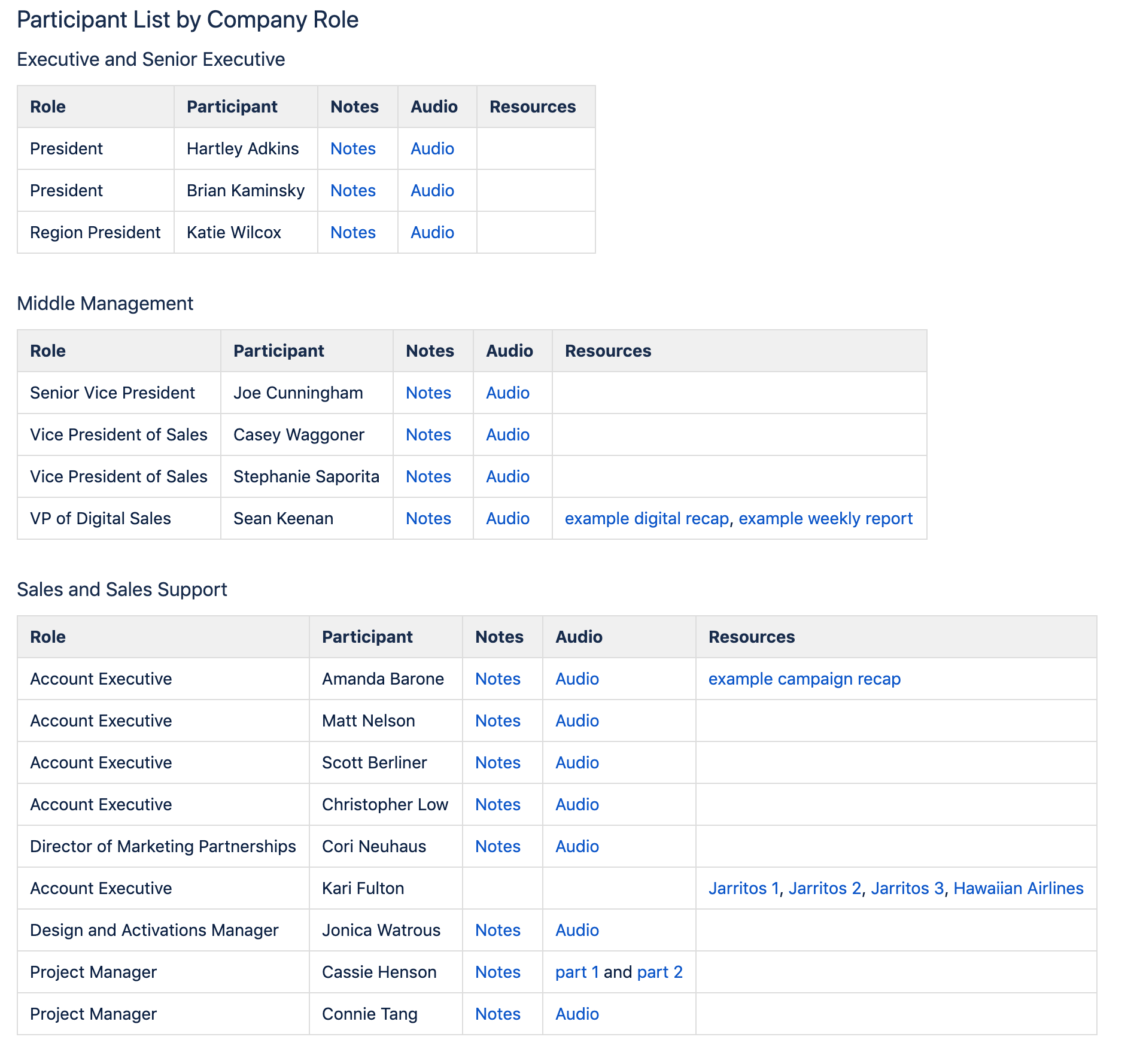

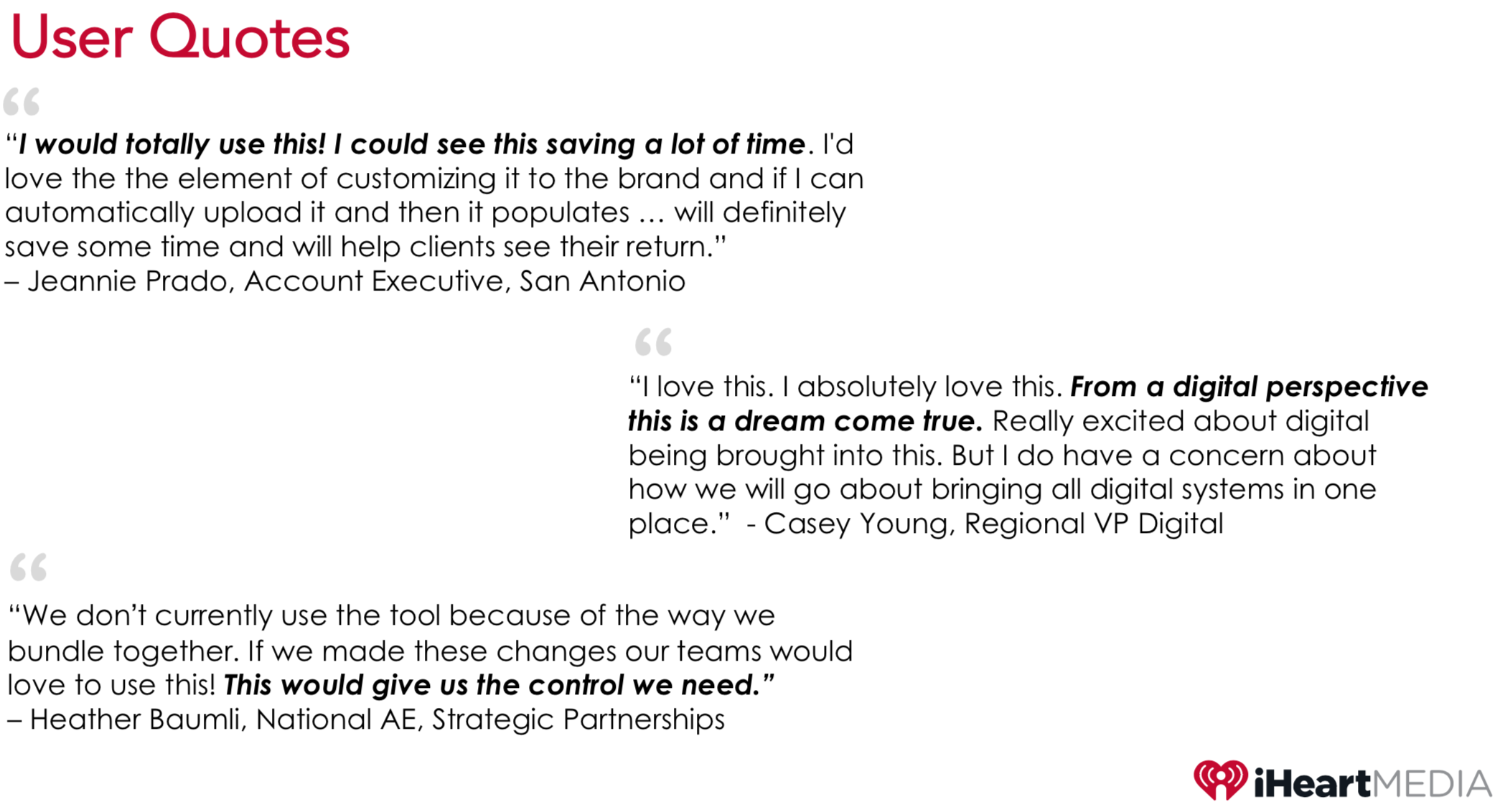

After just a week on the job, iHeartMedia flew me to New York City for a week to conduct user interviews with business stakeholders. The following week they flew me to San Francisco. Finally, I returned to corporate HQ in San Antonio to conduct more interviews the following week.

I interviewed people at every level of the company from C-level executives to sales support staff, totaling 17 interviews. For the sales staff, these conversations were in pursuit of answering our research questions outlined above. For the executives, the conversations were to understand the vision of where the company was headed, trends in media, and to get some measure of buy-in from the top.

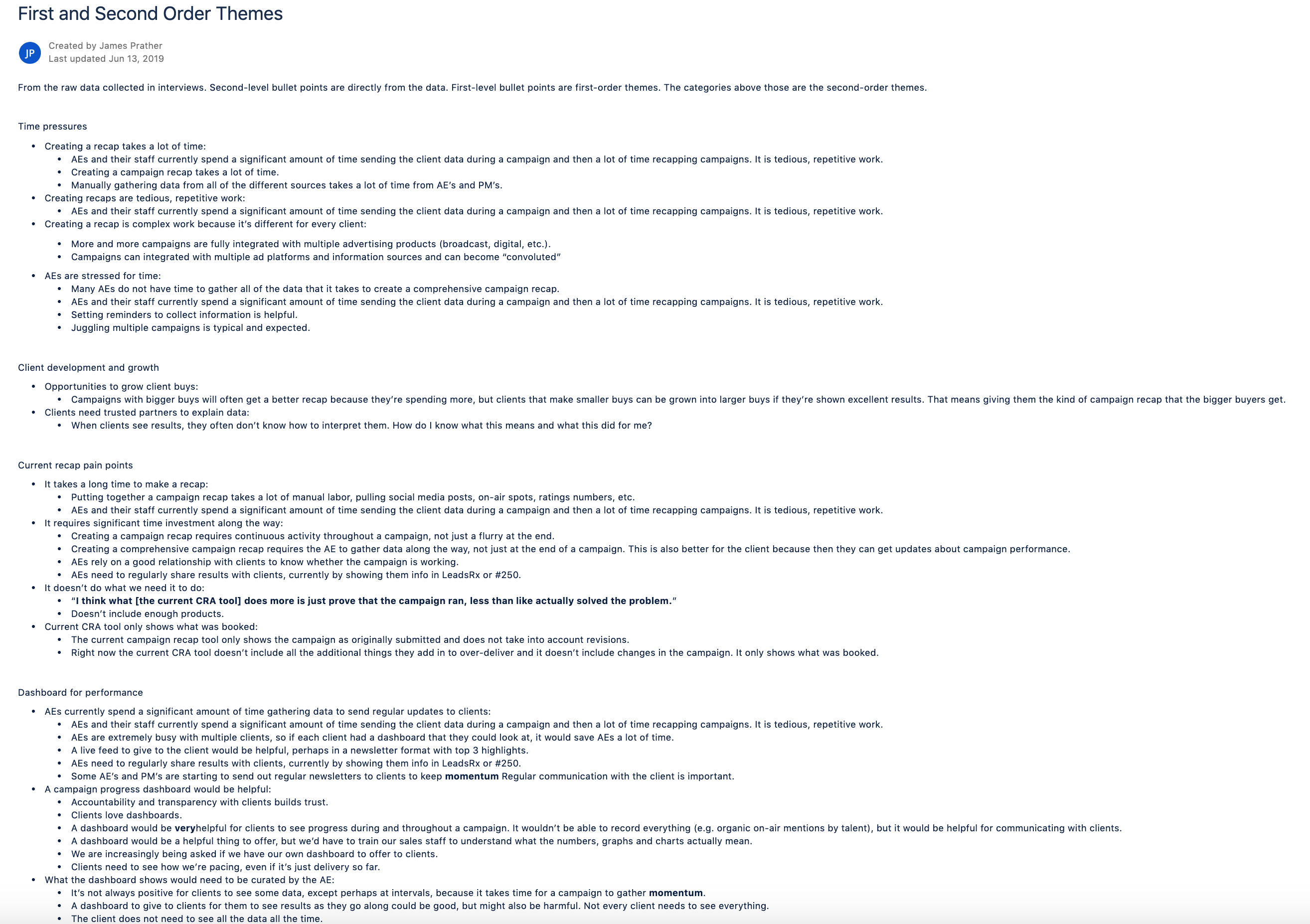

Each interview was carefully transcribed and the salient highlights pulled out for trend analysis. After this was completed for all the interviews, I produced first- and second-order themes so we could establish patterns in the data, understand our users, and move towards a user experience redesign.

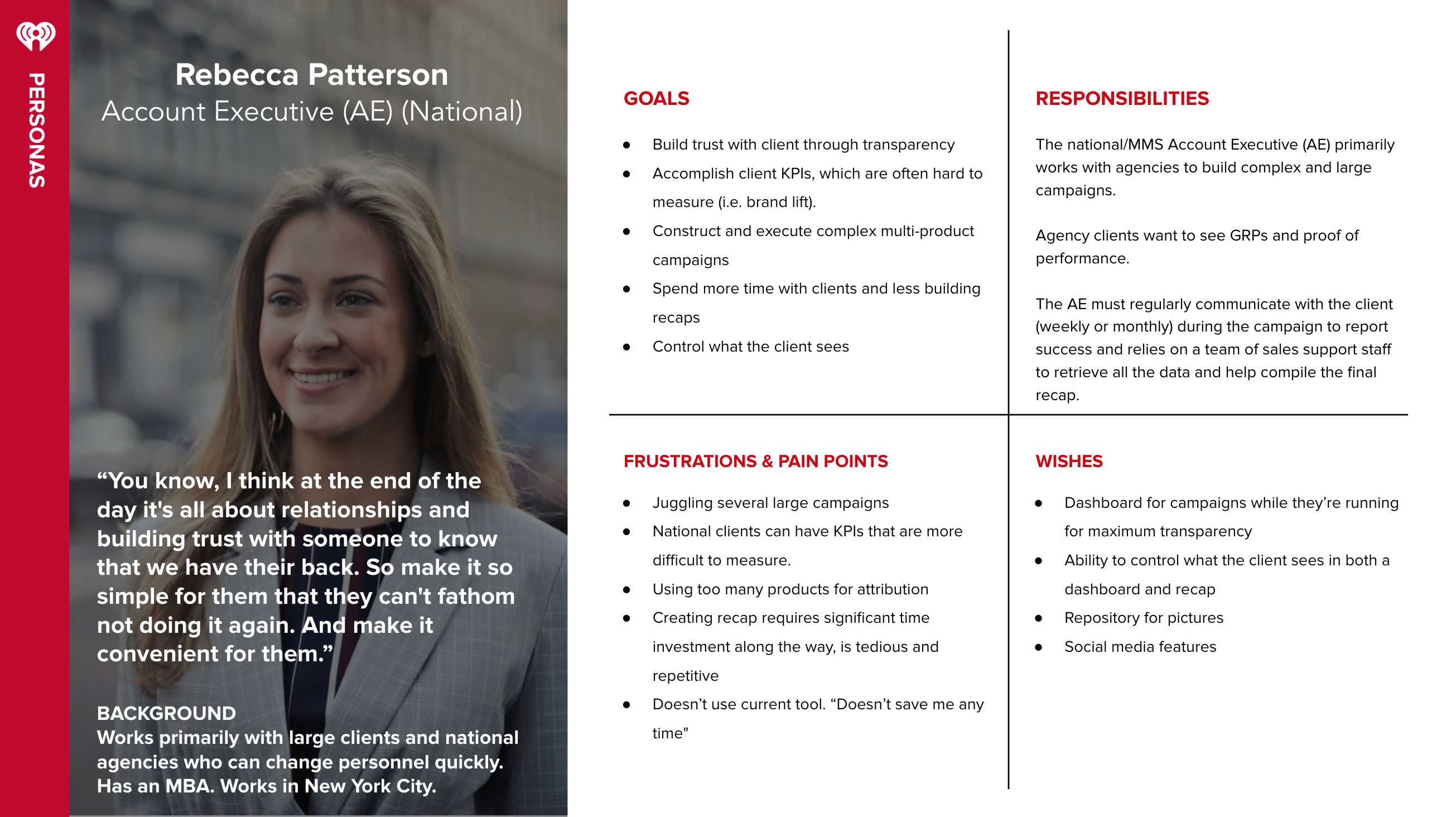

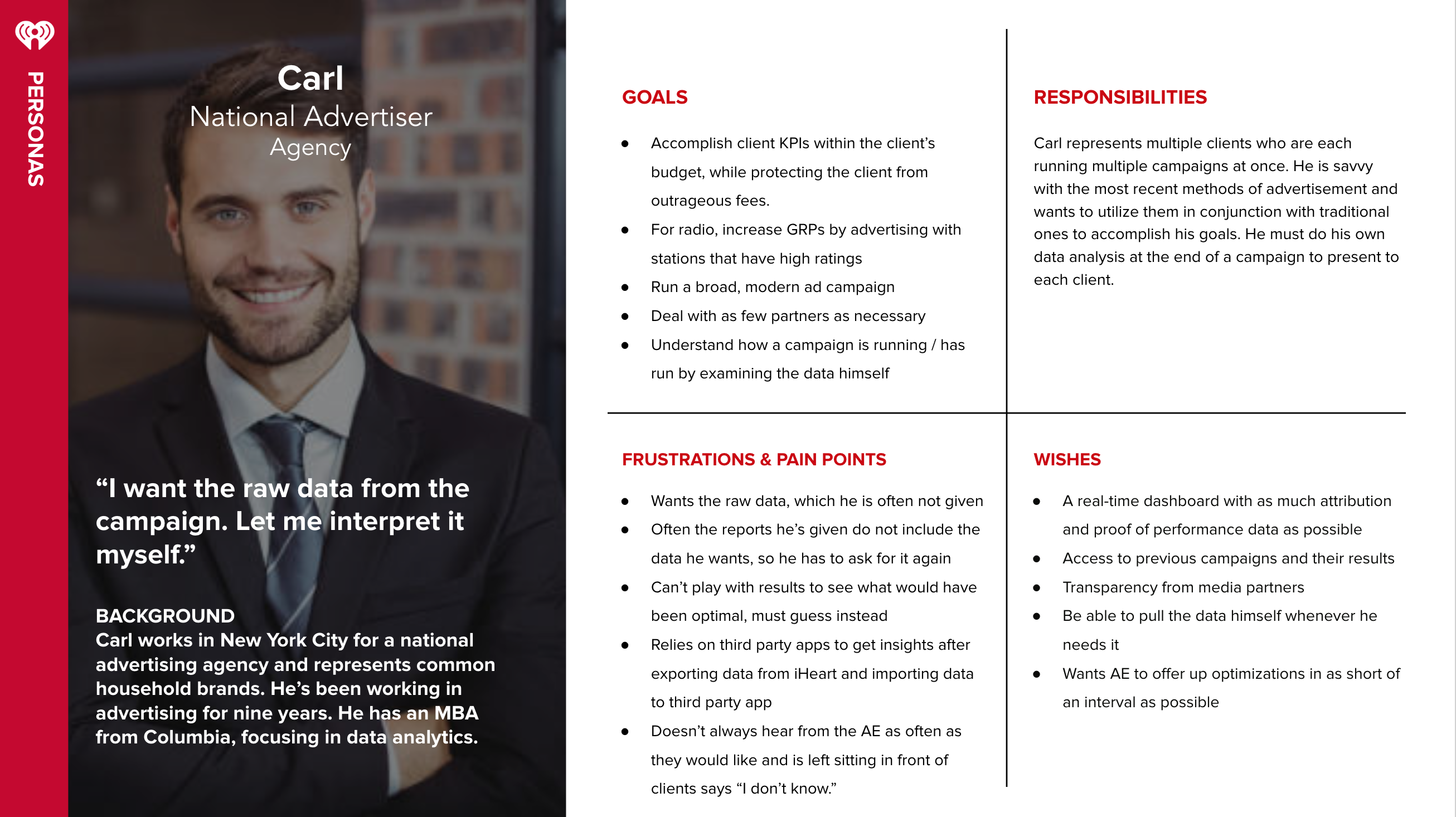

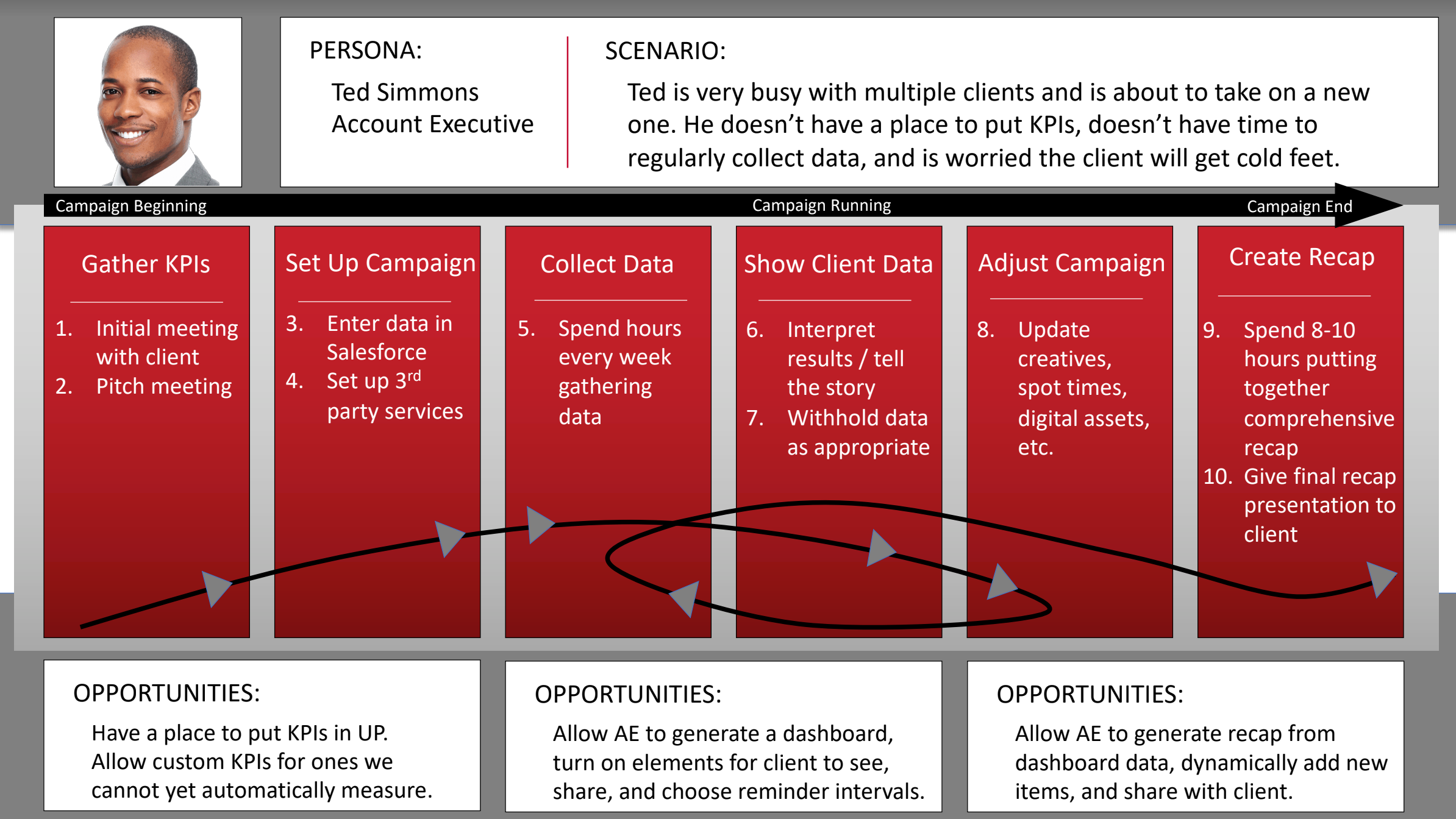

The next step was to build a better understanding of how users currently do their work and to visualize the pain points in a workflow so we could target those weaknesses in future design work. Whatever app redesign we envisioned had to address user needs (personas) and their workflow (journey map and service blueprint).

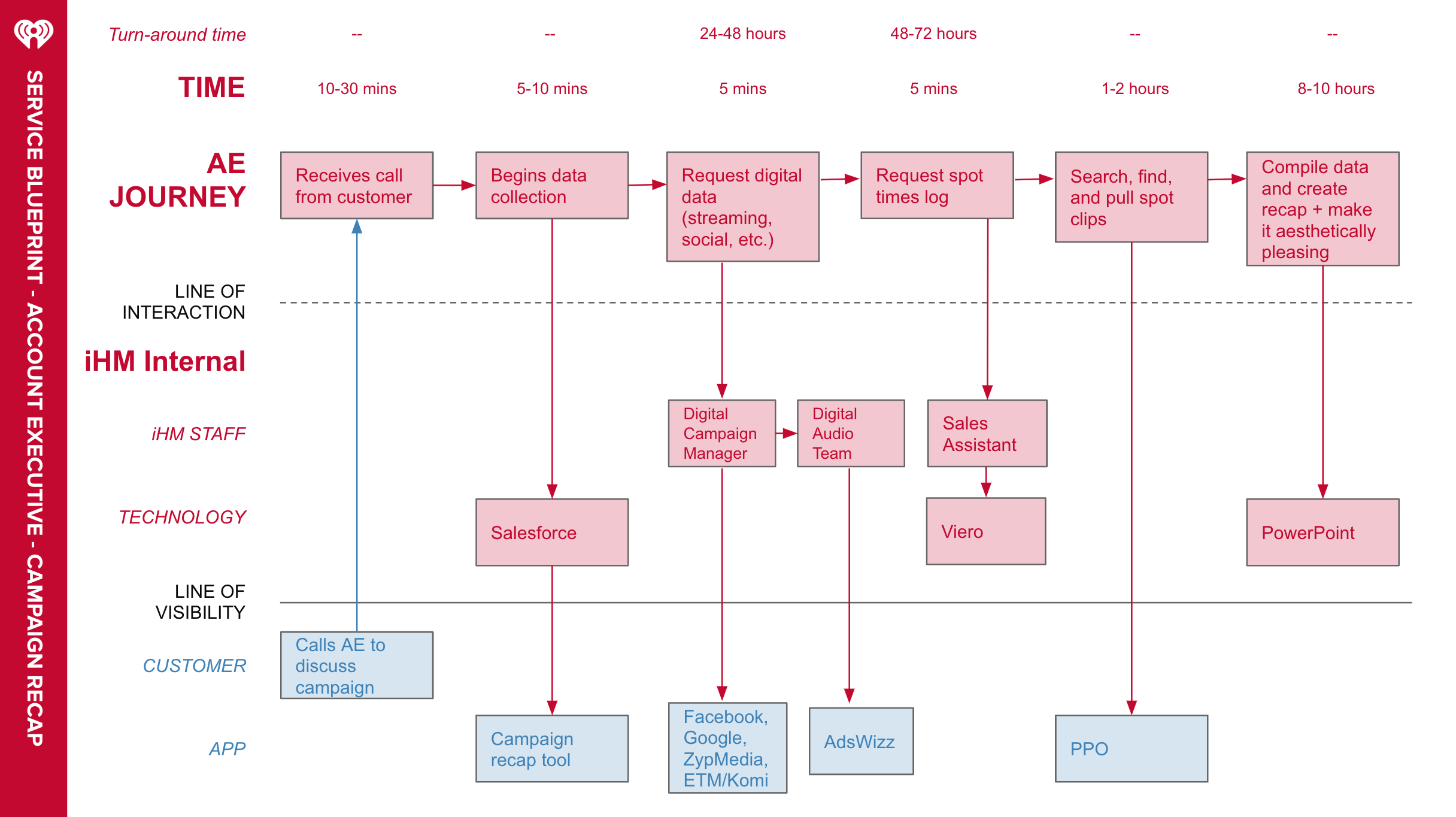

A service blueprint, like the one to the right, let us see not only the account executive's (AE's) workflow but also every interaction they have with internal and external systems and with other users, and where in the behind-the-scenes process a roadblock can occur.

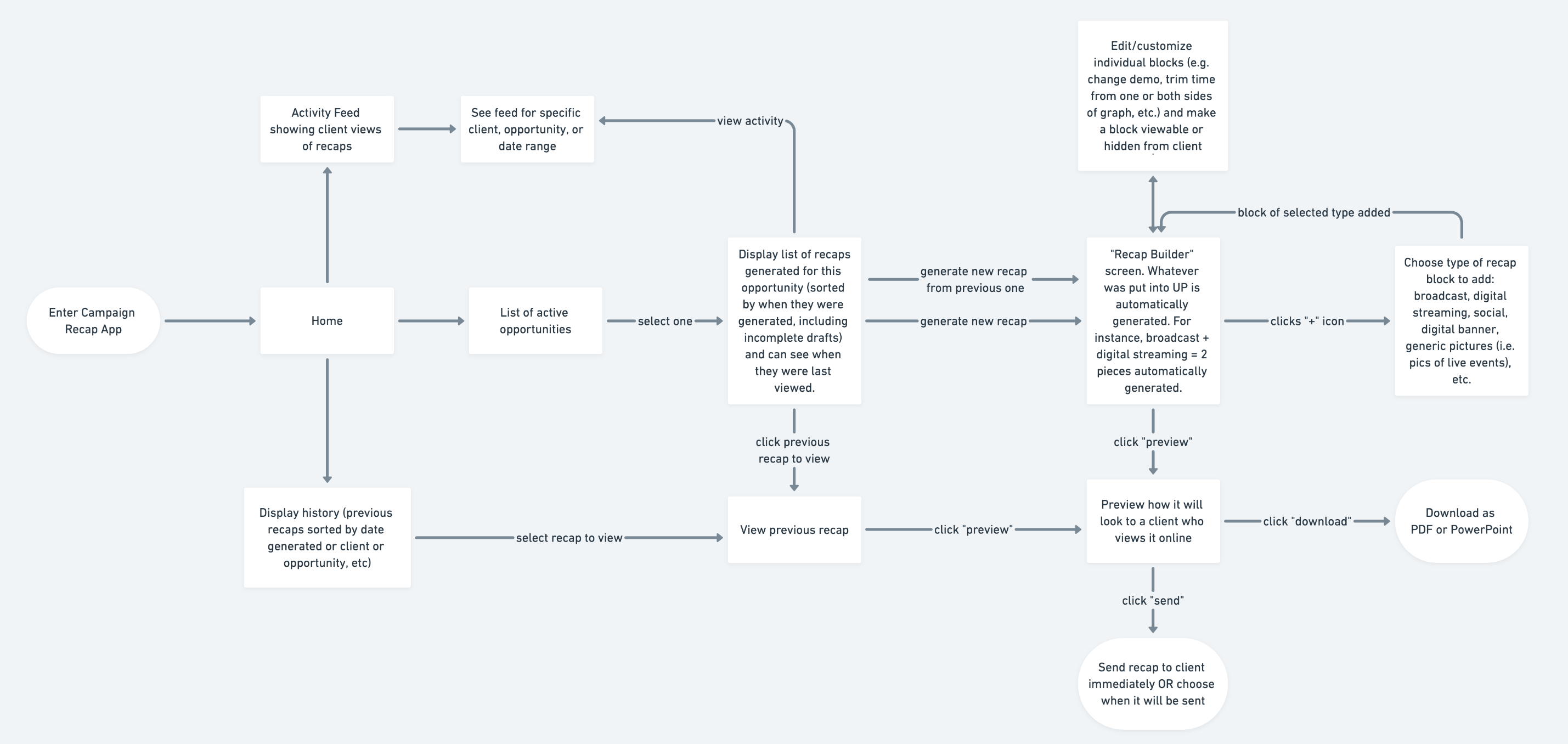

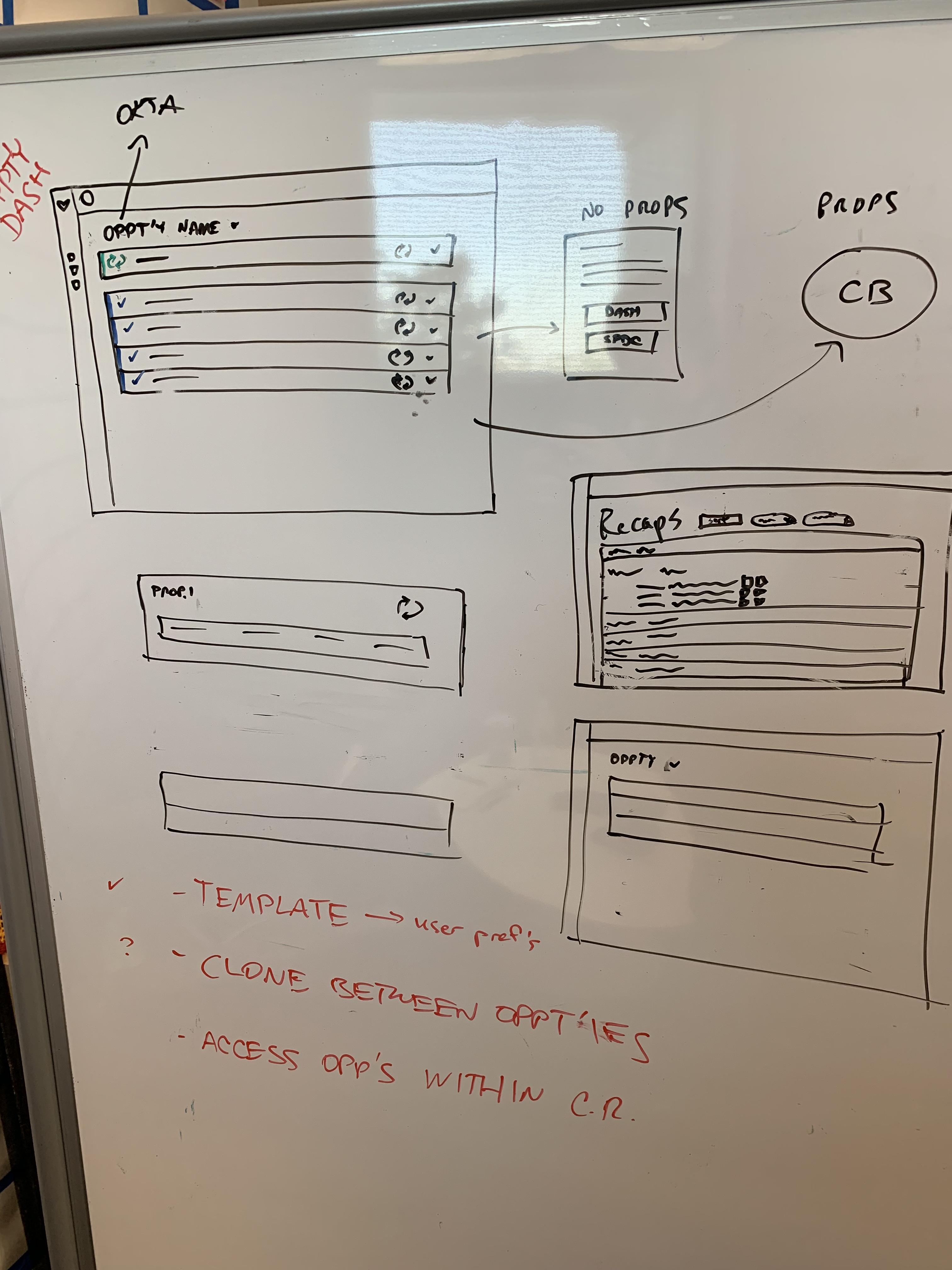

We started with abstract user flows. How should a user move through this redesigned app? What should their experience be? Visualizing this allowed us to minimize complexity while supporting the full experience desired by the account executives we interviewed.

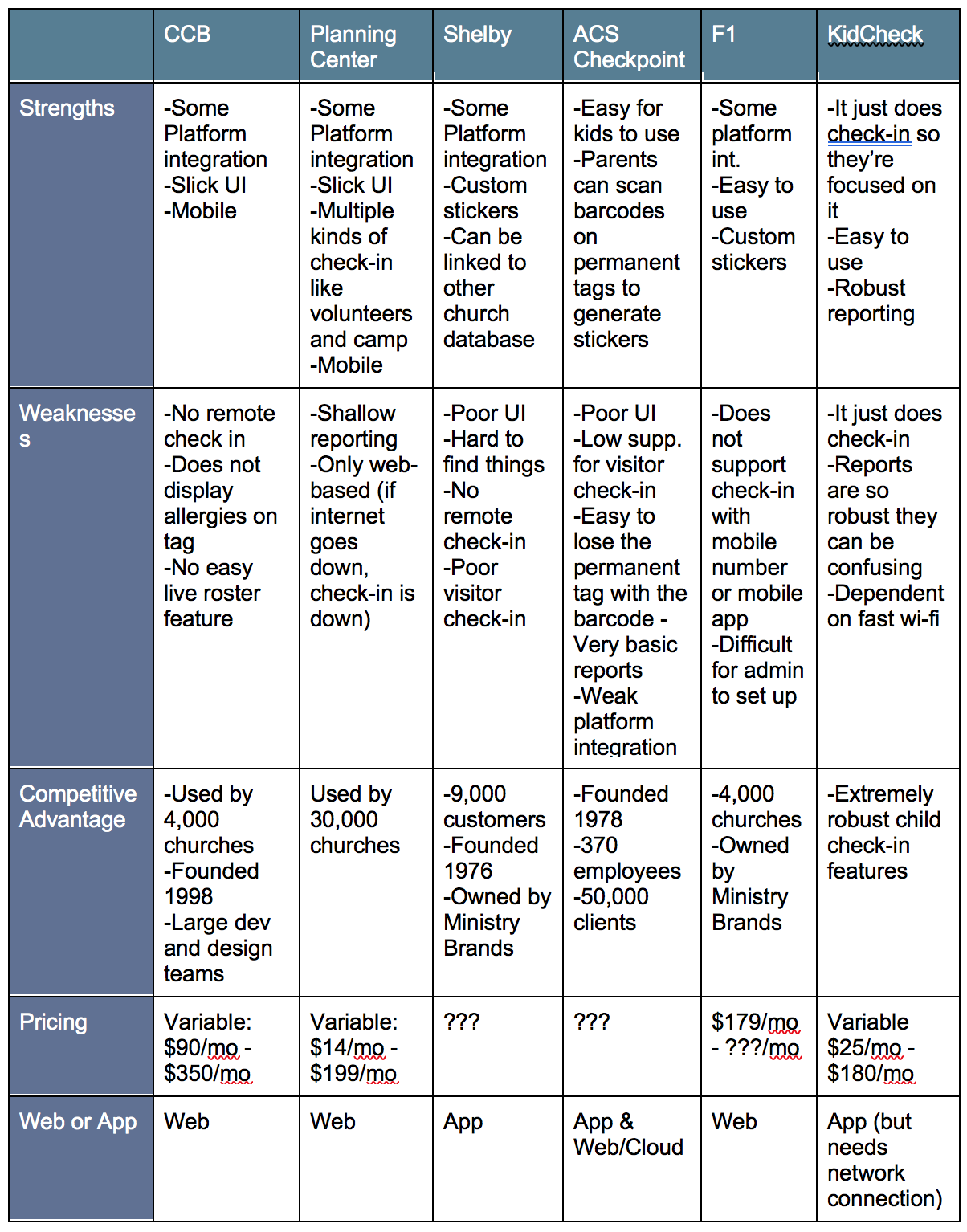

First, I performed a competitive analysis of the features our competitors were already offering to get a sense of the product space. I collected information from the websites and marketing material of the seven most popular child check-in solutions currently on the market. This was important for answering our first research question above.

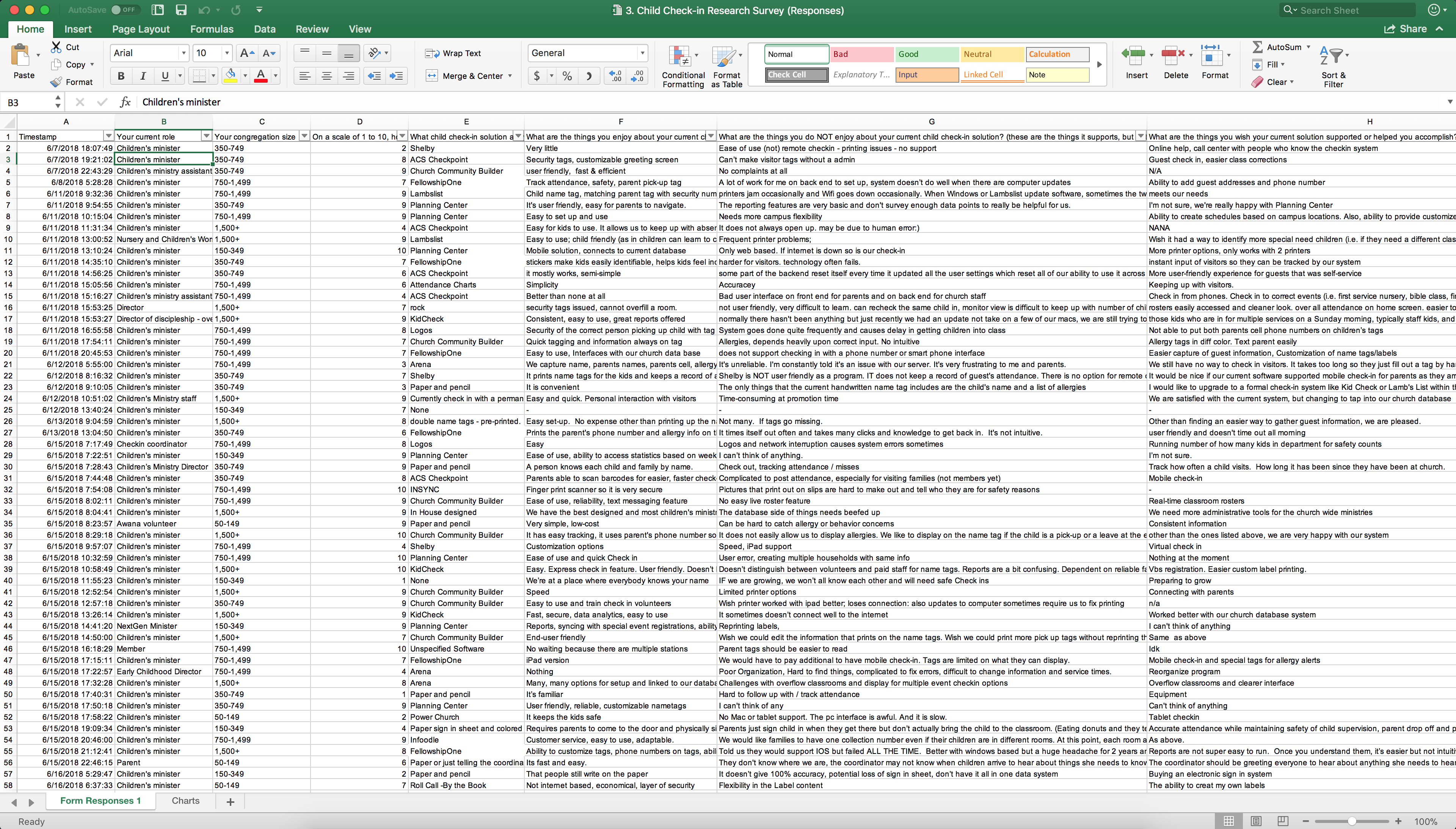

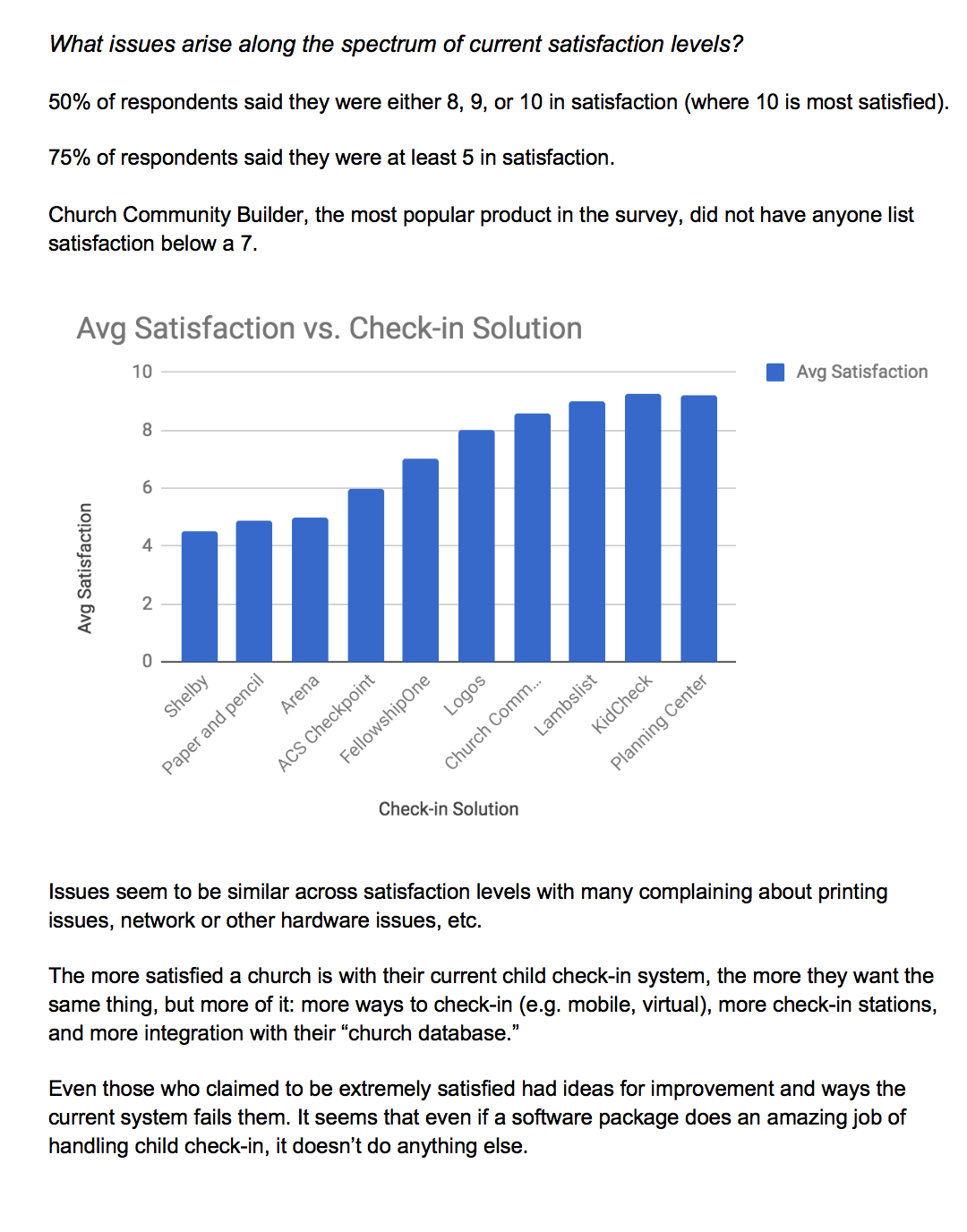

Next, I conducted research with 78 children's ministers through telephone interviews and a Google Forms research survey. I wanted to find out what users want, what frustrates them about their current experience, and how they actually use their current software solution. This helped answer research questions two and three.

I took the research data and processed it into themes and patterns so that we could get a "big picture" view of the trends we were seeing from 30,000 feet up. Since I collected data from churches of all sizes - from fewer than 100 to more than 5,000 members - it was important to show the data in different ways. I broke out the data by church size, by current software solution, and by current overall satisfaction level.
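A breakout like this can be sketched in a few lines of Python. The following is only a minimal illustration, not the analysis actually used on this project: the sample rows, the size bands, the 1-5 satisfaction scale, and the names `size_bucket` and `mean_satisfaction_by` are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey rows: (church_members, current_software, satisfaction 1-5).
# These values are invented for illustration and are not the real research data.
responses = [
    (80, "Tool A", 4),
    (350, "Tool B", 2),
    (90, "Tool B", 3),
    (6000, "Tool A", 5),
    (1200, "Tool A", 3),
]


def size_bucket(members):
    """Map a member count to an illustrative size band (cutoffs are assumptions)."""
    if members < 100:
        return "under 100"
    if members <= 5000:
        return "100-5,000"
    return "over 5,000"


def mean_satisfaction_by(rows, key):
    """Group rows by key(row) and return the mean satisfaction score per group."""
    groups = defaultdict(list)
    for row in rows:
        groups[key(row)].append(row[2])
    return {group: mean(scores) for group, scores in groups.items()}


# The same responses, viewed two different ways.
by_size = mean_satisfaction_by(responses, lambda r: size_bucket(r[0]))
by_software = mean_satisfaction_by(responses, lambda r: r[1])

print(by_size)
print(by_software)
```

Passing the grouping rule in as a function keeps one aggregation routine reusable for every view of the data, so adding another breakout only requires a new key function.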

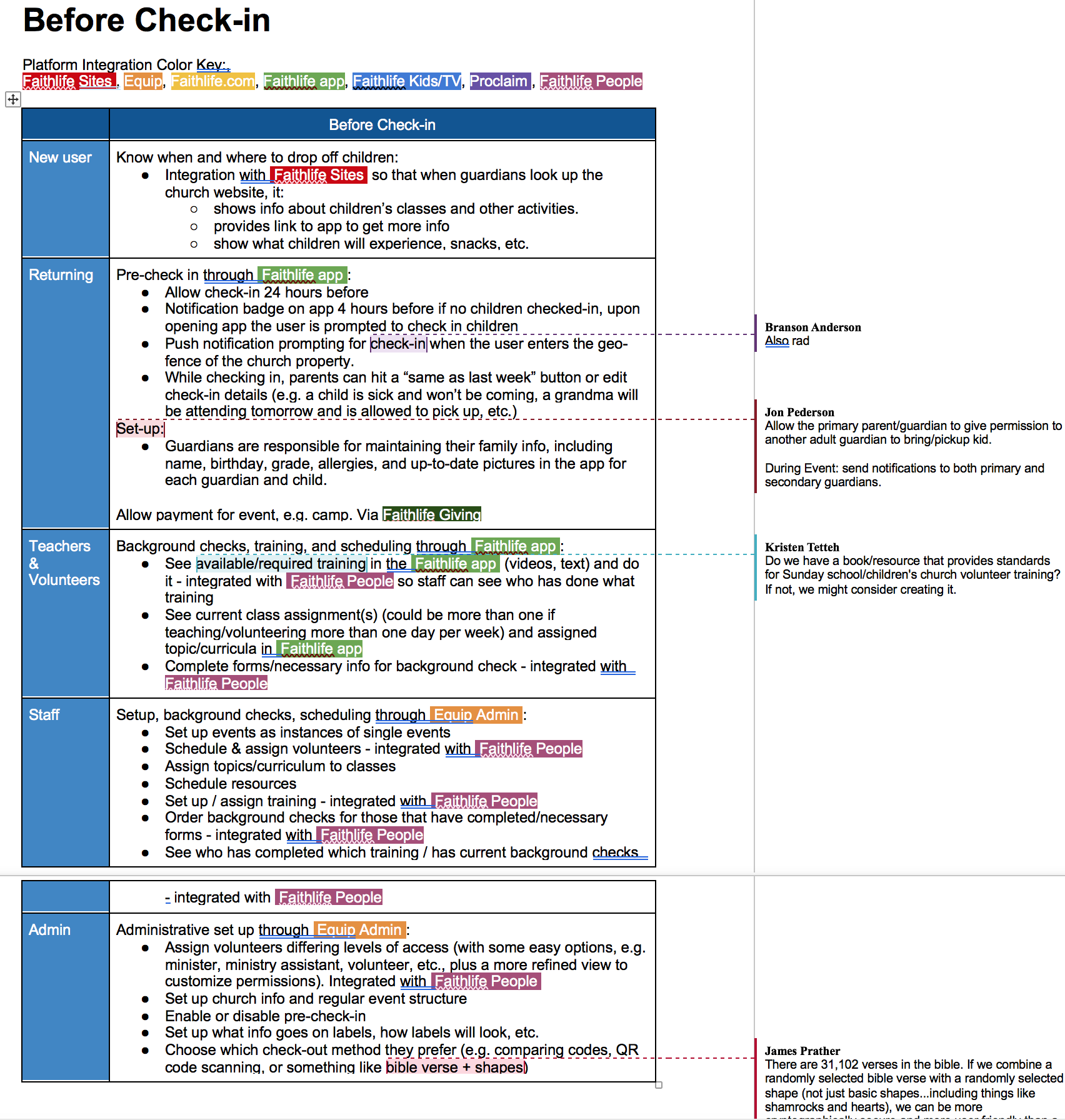

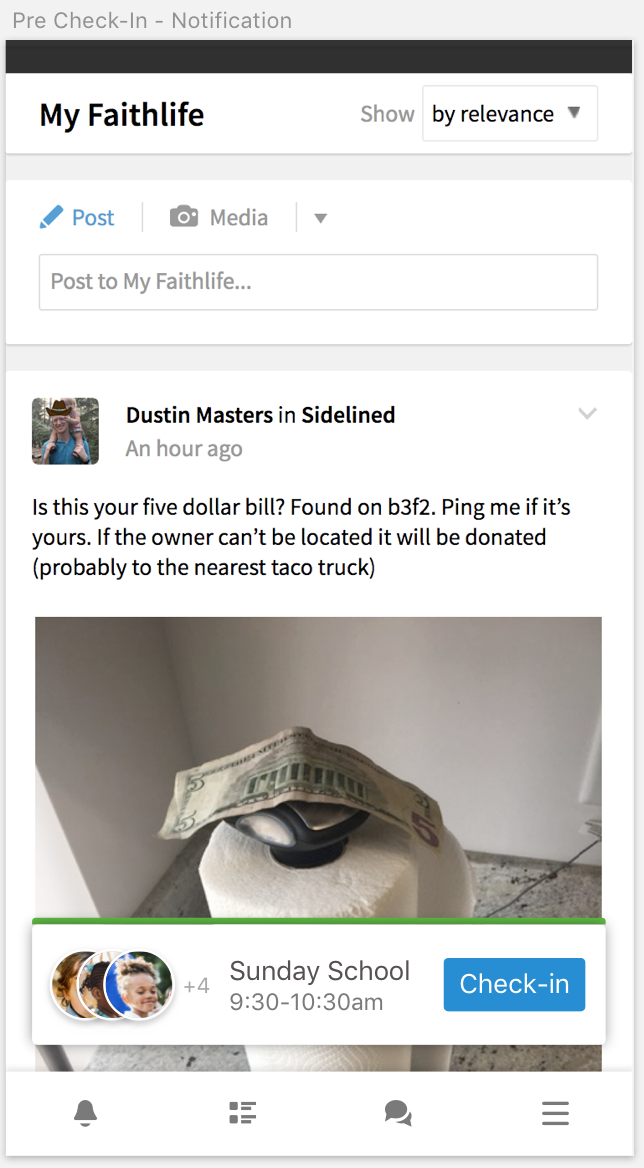

Finally, I could answer "what problem are we actually trying to solve?" and "what should our solution be like?", which allowed me to generate a user experience flow for each user type at every point of interaction. This received vigorous discussion through collaborative comments from the various stakeholders and other members of the UI/UX team.

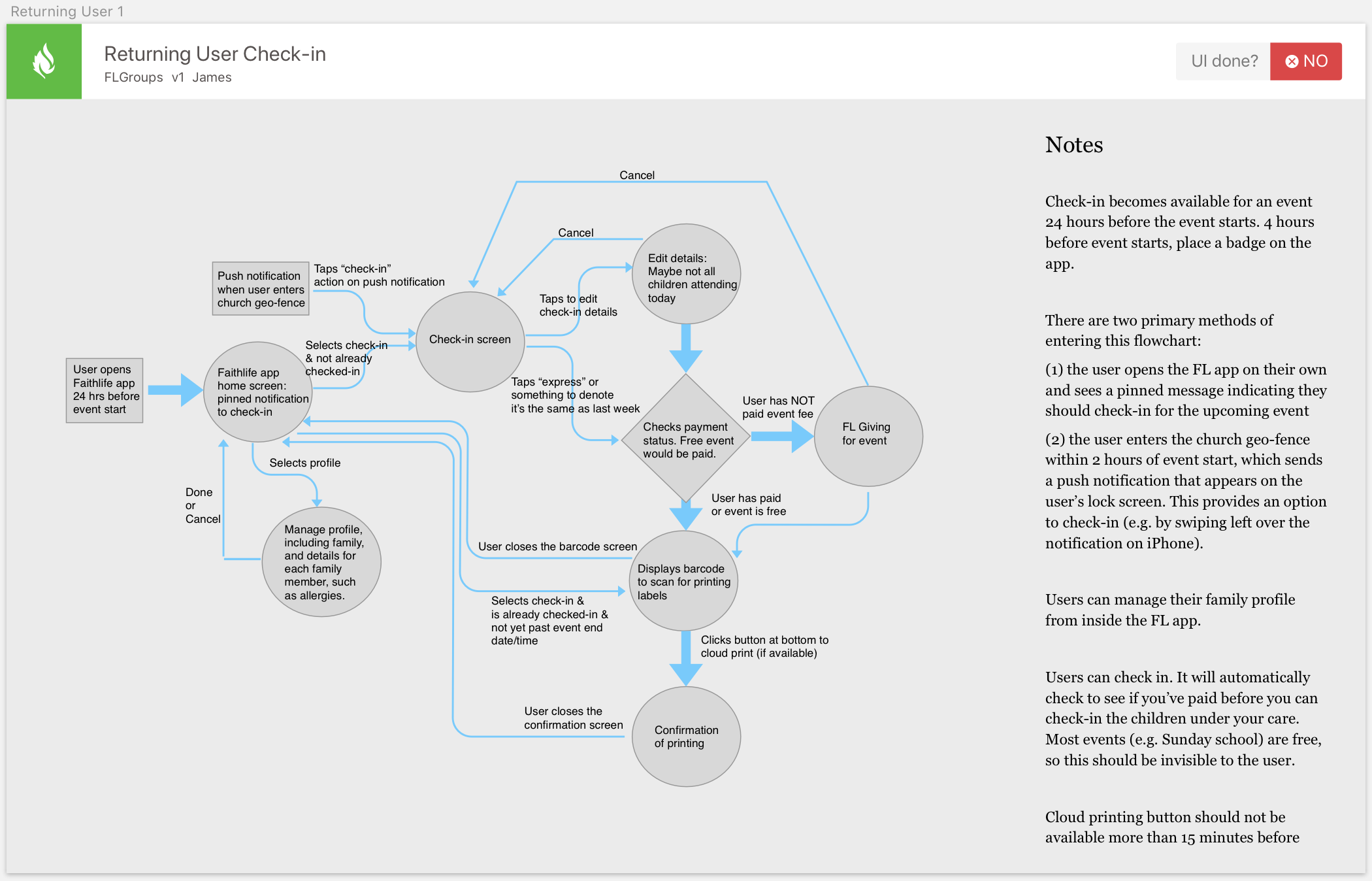

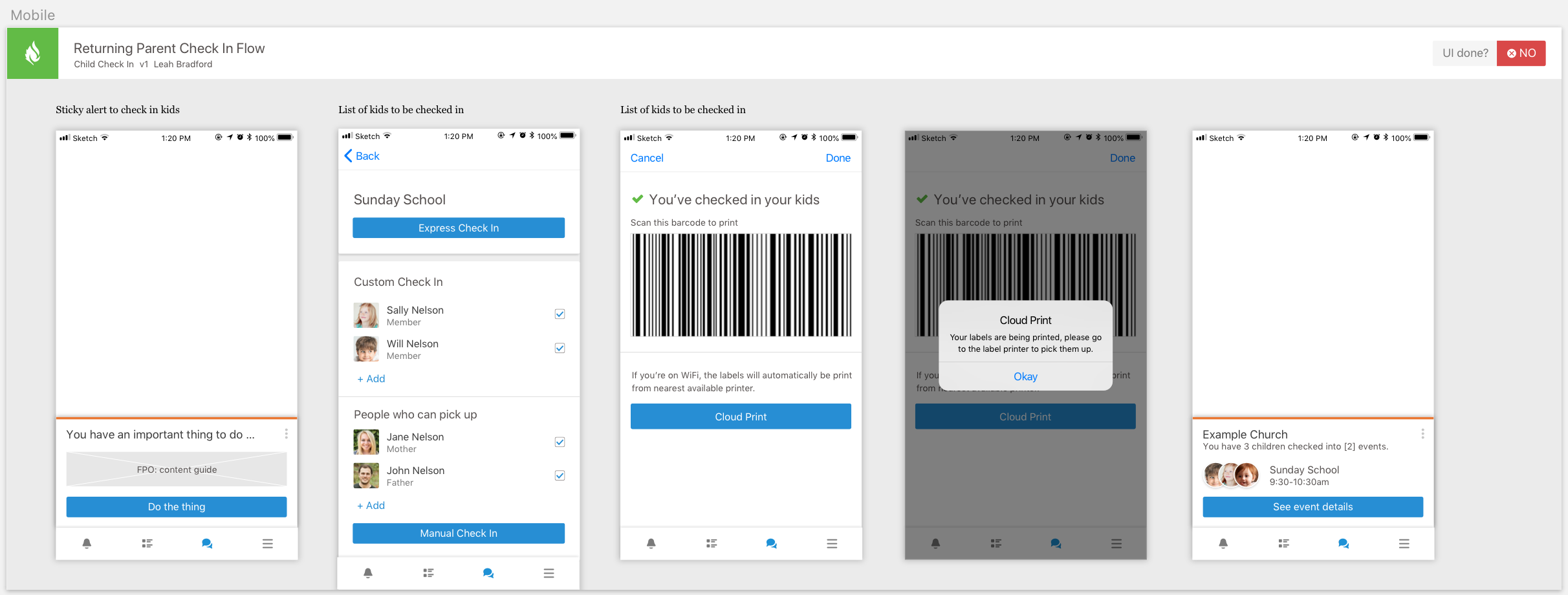

The first step into design was to translate the user experience flow document into a UX flow chart, with each screen represented, and to think about how one moves from screen to screen in the app.

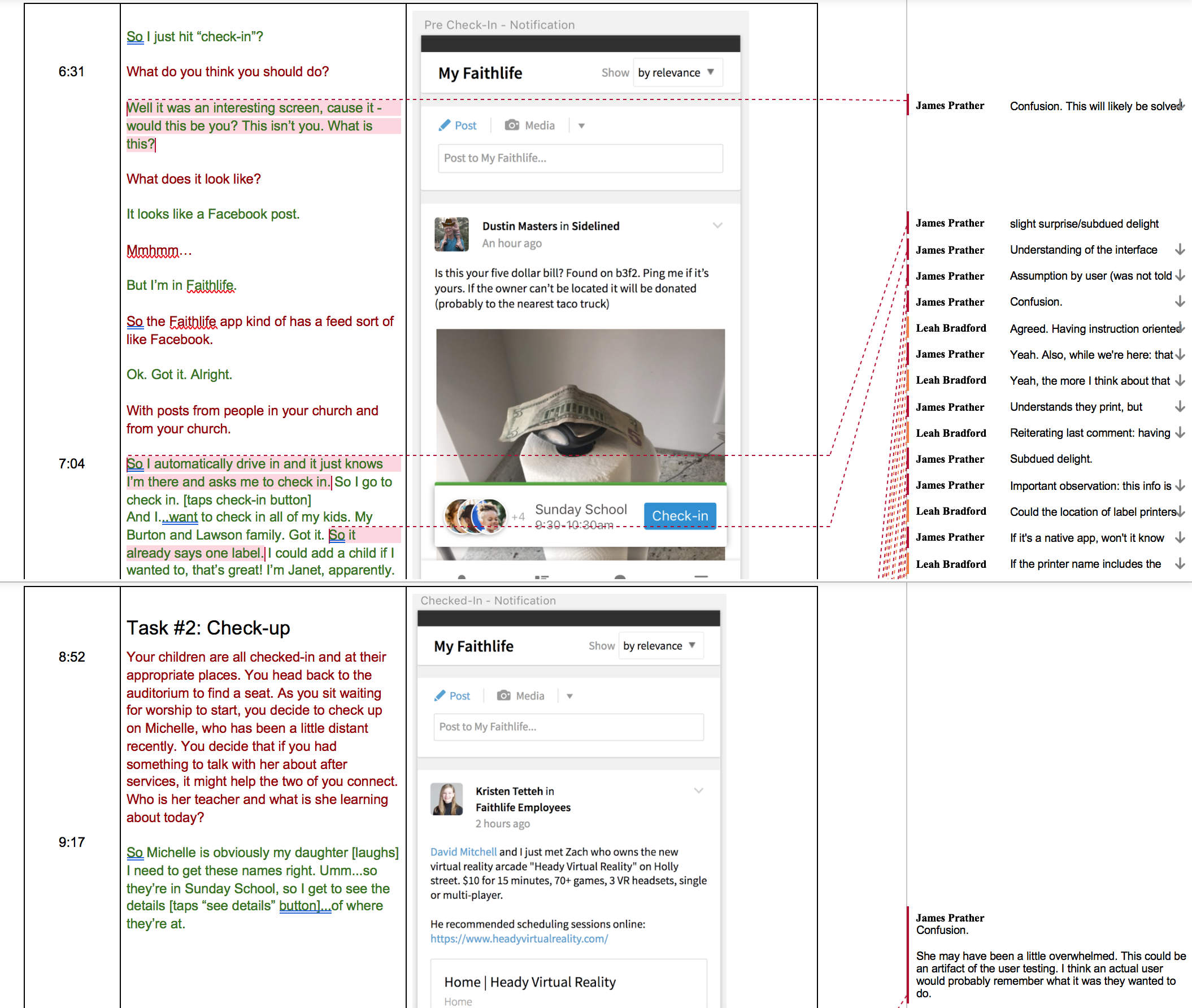

Each user test was transcribed, with speech from each person color-coded and positioned opposite a screenshot of what the user was looking at when they made the comment. After transcription was completed, I made thorough notes that highlighted the most important and salient discoveries from that session.